
Normal distribution

In probability theory, the normal (or Gaussian) distribution is a continuous probability distribution that has a bell-shaped probability density function, known as the Gaussian function or informally the bell curve:[nb 1]

\( f(x;\mu,\sigma^2) = \frac{1}{\sigma\sqrt{2\pi}} e^{ -\frac{1}{2}\left(\frac{x-\mu}{\sigma}\right)^2 } \)

The parameter μ is the mean or expectation (location of the peak) and σ² is the variance. σ is known as the standard deviation. The distribution with μ = 0 and σ² = 1 is called the standard normal distribution or the unit normal distribution. A normal distribution is often used as a first approximation to describe real-valued random variables that cluster around a single mean value.

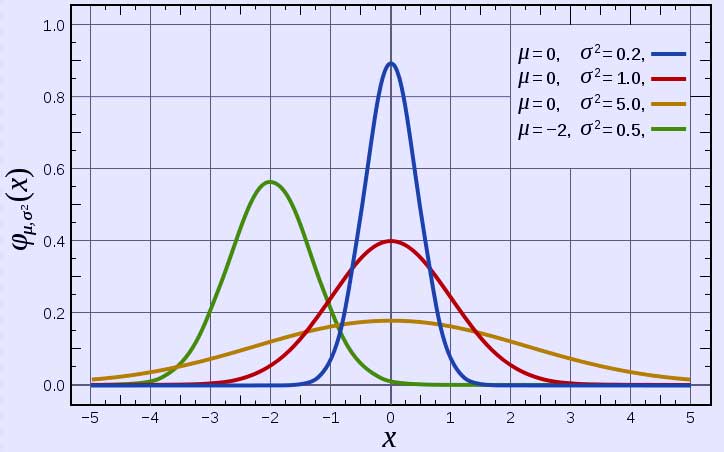

Probability density function

The red curve is the standard normal distribution

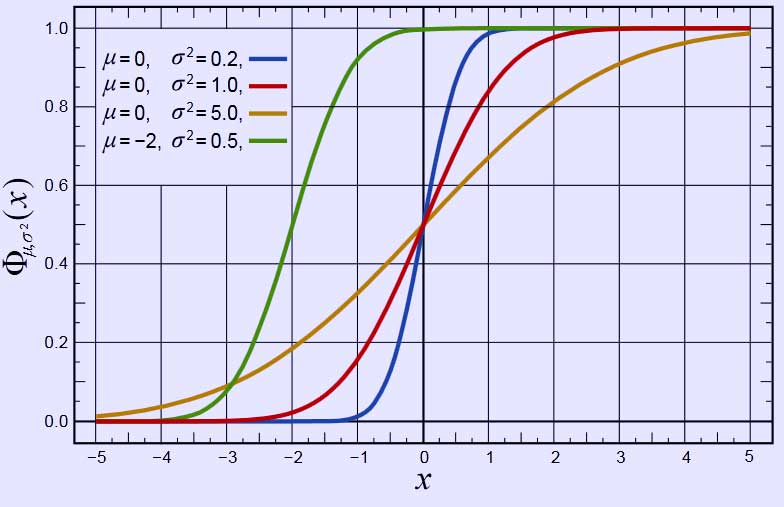

Cumulative distribution function

The normal distribution is considered the most prominent probability distribution in statistics. There are several reasons for this:[1] First, the normal distribution arises from the central limit theorem, which states that under mild conditions the sum of a large number of independent random variables drawn from the same distribution with finite variance is approximately normally distributed, irrespective of the form of the original distribution. This gives it exceptionally wide application in, for example, sampling. Second, the normal distribution is very tractable analytically; that is, a large number of results involving this distribution can be derived in explicit form.

For these reasons, the normal distribution is commonly encountered in practice, and is used throughout statistics, natural sciences, and social sciences[2] as a simple model for complex phenomena. For example, the observational error in an experiment is usually assumed to follow a normal distribution, and the propagation of uncertainty is computed using this assumption. Note that a normally distributed variable has a symmetric distribution about its mean. Quantities that grow exponentially, such as prices, incomes or populations, are often skewed to the right, and hence may be better described by other distributions, such as the log-normal distribution or Pareto distribution. In addition, the probability of seeing a normally distributed value that is far from the mean (i.e., more than a few standard deviations away) drops off extremely rapidly. As a result, statistical inference using a normal distribution is not robust to the presence of outliers (data that are unexpectedly far from the mean, due to exceptional circumstances, observational error, etc.). When outliers are expected, data may be better described using a heavy-tailed distribution such as Student's t-distribution.

From a technical perspective, alternative characterizations are possible, for example:

- The normal distribution is the only absolutely continuous distribution all of whose cumulants beyond the first two (i.e., other than the mean and variance) are zero.

- For a given mean and variance, the corresponding normal distribution is the continuous distribution with the maximum entropy.[3][4]

- The normal distributions are a sub-class of the elliptical distributions.

Definition

The simplest case of a normal distribution is known as the standard normal distribution, described by the probability density function

\( \phi(x) = \frac{1}{\sqrt{2\pi}}\, e^{- \frac{\scriptscriptstyle 1}{\scriptscriptstyle 2} x^2}. \)

The factor \( \scriptstyle\ 1/\sqrt{2\pi} \) in this expression ensures that the total area under the curve ϕ(x) is equal to one,[proof] and the ½ in the exponent makes the "width" of the curve (measured as half the distance between the inflection points) also equal to one. It is traditional in statistics to denote this function with the Greek letter ϕ (phi), whereas density functions for all other distributions are usually denoted with letters f or p.[5] The alternative glyph φ is also used quite often; however, within this article "φ" is reserved to denote characteristic functions.

Every normal distribution is the result of exponentiating a quadratic function (just as an exponential distribution results from exponentiating a linear function):

\( f(x) = e^{a x^2 + b x + c}. \, \)

This yields the classic "bell curve" shape, provided that a < 0 so that the quadratic function is concave. Note that f(x) > 0 everywhere. One can adjust a to control the "width" of the bell, then adjust b to move the central peak of the bell along the x-axis, and finally one must choose c such that \( \int_{-\infty}^\infty f(x)\,dx = 1 \) (which is only possible when a < 0).

Rather than using a, b, and c, it is far more common to describe a normal distribution by its mean \( \mu = -\frac{b}{2a} \) and variance \( \sigma^2 = -\frac{1}{2a} \). Changing to these new parameters allows one to rewrite the probability density function in a convenient standard form,

\( f(x) = \frac{1}{\sqrt{2\pi\sigma^2}}\, e^{\frac{-(x-\mu)^2}{2\sigma^2}} = \frac{1}{\sigma}\, \phi\!\left(\frac{x-\mu}{\sigma}\right). \)

For a standard normal distribution, μ = 0 and σ² = 1. The last part of the equation above shows that any other normal distribution can be regarded as a version of the standard normal distribution that has been stretched horizontally by a factor σ and then translated rightward by a distance μ. Thus, μ specifies the position of the bell curve's central peak, and σ specifies the "width" of the bell curve.
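As a quick check of this reparametrization, the sketch below (plain Python; the helper names `quadratic_to_normal` and `normal_pdf` are hypothetical) maps coefficients a < 0 and b to μ and σ², picks the constant c that makes the density integrate to one, and compares the exponentiated quadratic with the standard form at a few points.

```python
import math


def quadratic_to_normal(a: float, b: float) -> tuple[float, float, float]:
    """Map f(x) = exp(a*x**2 + b*x + c) to (mu, sigma2, c), with c chosen so f integrates to 1."""
    if a >= 0:
        raise ValueError("a must be negative, otherwise f cannot be normalized")
    mu = -b / (2 * a)
    sigma2 = -1 / (2 * a)
    # Expanding -(x - mu)**2 / (2*sigma2) - log(sqrt(2*pi*sigma2)) gives this constant term.
    c = -mu**2 / (2 * sigma2) - 0.5 * math.log(2 * math.pi * sigma2)
    return mu, sigma2, c


def normal_pdf(x: float, mu: float, sigma2: float) -> float:
    """Standard-form density of N(mu, sigma2)."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma2)) / math.sqrt(2 * math.pi * sigma2)


a, b = -0.125, 0.5  # arbitrary example coefficients with a < 0
mu, sigma2, c = quadratic_to_normal(a, b)
print(mu, sigma2)  # 2.0 4.0

# The exponentiated quadratic and the standard form agree pointwise.
for x in (-3.0, 0.0, 2.0, 5.5):
    assert math.isclose(math.exp(a * x * x + b * x + c), normal_pdf(x, mu, sigma2), rel_tol=1e-12)
```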

The parameter μ is at the same time the mean, the median and the mode of the normal distribution. The parameter σ² is called the variance; as for any random variable, it describes how concentrated the distribution is around its mean. The square root of σ² is called the standard deviation and measures the width of the density function.

The normal distribution is usually denoted by N(μ, σ²).[6] Thus when a random variable X is distributed normally with mean μ and variance σ², we write

\( X\ \sim\ \mathcal{N}(\mu,\,\sigma^2). \, \)

Alternative formulations

Some authors advocate using the precision instead of the variance. The precision is normally defined as the reciprocal of the variance (τ = σ⁻²), although it is occasionally defined as the reciprocal of the standard deviation (τ = σ⁻¹).[7] This parametrization has an advantage in numerical applications where σ² is very close to zero, and is more convenient to work with in analysis, as τ is, up to a constant factor, a natural parameter of the normal distribution. This parametrization is common in Bayesian statistics, as it simplifies the Bayesian analysis of the normal distribution. Another advantage of this parametrization appears in the study of conditional distributions in the multivariate normal case. The form of the normal distribution with the more common definition τ = σ⁻² is as follows:

\( f(x;\,\mu,\tau) = \sqrt{\frac{\tau}{2\pi}}\, e^{\frac{-\tau(x-\mu)^2}{2}}. \)
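The two parametrizations describe the same density. A minimal sketch in plain Python (the function names are illustrative, not from any library) evaluates both forms with τ = 1/σ² and confirms that they agree:

```python
import math


def pdf_variance(x: float, mu: float, sigma2: float) -> float:
    """Density of N(mu, sigma2) in the usual variance form."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma2)) / math.sqrt(2 * math.pi * sigma2)


def pdf_precision(x: float, mu: float, tau: float) -> float:
    """The same density written with the precision tau = 1 / sigma2."""
    return math.sqrt(tau / (2 * math.pi)) * math.exp(-tau * (x - mu) ** 2 / 2)


mu, sigma2 = 1.0, 0.25
tau = 1 / sigma2  # precision = 4.0
for x in (-1.0, 0.5, 1.0, 2.0):
    assert math.isclose(pdf_variance(x, mu, sigma2), pdf_precision(x, mu, tau), rel_tol=1e-12)
```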

The question of which normal distribution should be called the "standard" one is also answered differently by various authors. Starting with the works of Gauss, the standard normal was considered to be the one with variance σ² = 1/2:

\( f(x) = \frac{1}{\sqrt\pi}\,e^{-x^2} \)

Stigler (1982) goes even further and proposes that the standard normal should have variance σ² = 1/(2π):

\( f(x) = e^{-\pi x^2} \)

According to the author, this formulation is advantageous because of a much simpler and easier-to-remember formula, the fact that the pdf has unit height at zero, and simple approximate formulas for the quantiles of the distribution.
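To see that the three conventions differ only in scale, the sketch below (plain Python; the labels and the helper `zero_mean_normal_pdf` are illustrative) evaluates each zero-mean density at 0 and integrates it with a simple midpoint rule. Stigler's version has height 1 at zero, as stated above, and all three have unit area.

```python
import math


def zero_mean_normal_pdf(var: float):
    """Return the density of N(0, var) as a function of x."""
    return lambda x: math.exp(-x * x / (2 * var)) / math.sqrt(2 * math.pi * var)


conventions = {
    "sigma^2 = 1": 1.0,
    "Gauss, sigma^2 = 1/2": 0.5,
    "Stigler, sigma^2 = 1/(2 pi)": 1 / (2 * math.pi),
}

h = 1e-3  # midpoint-rule step; [-10, 10] is wide enough for all three variances
for name, var in conventions.items():
    f = zero_mean_normal_pdf(var)
    area = h * sum(f(-10 + (k + 0.5) * h) for k in range(20_000))
    print(f"{name:28} f(0) = {f(0):.6f}   area = {area:.6f}")

# The closed forms quoted in the text:
assert math.isclose(zero_mean_normal_pdf(0.5)(0.7), math.exp(-0.49) / math.sqrt(math.pi))
assert math.isclose(zero_mean_normal_pdf(1 / (2 * math.pi))(0.3), math.exp(-math.pi * 0.09))
```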

Characterization

In the previous section the normal distribution was defined by specifying its probability density function. However, there are other ways to characterize a probability distribution. They include the cumulative distribution function, the moments, the cumulants, the characteristic function and the moment-generating function.

Probability density function

The probability density function (pdf) of a random variable describes the relative likelihood of different values of that random variable. The pdf of the normal distribution is given by the formula explained in detail in the previous section:

\( f(x;\,\mu,\sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}} \, e^{-(x-\mu)^2\!/(2\sigma^2)} = \frac{1}{\sigma} \,\phi\!\left(\frac{x-\mu}{\sigma}\right), \qquad x\in\mathbb{R}. \)

This is a proper function only when the variance σ² is not equal to zero. In that case it is a continuous, smooth function defined on the entire real line, called the "Gaussian function".

Properties:

- Function f(x) is unimodal and symmetric around the point x = μ, which is at the same time the mode, the median and the mean of the distribution.[8]

- The inflection points of the curve occur one standard deviation away from the mean (i.e., at x = μ − σ and x = μ + σ).[8]

- Function f(x) is log-concave.[8]

- The standard normal density ϕ(x) is an eigenfunction of the Fourier transform. More generally, if f is a normalized Gaussian function with variance σ², centered at zero, then its Fourier transform is a Gaussian function with variance 1/σ².

- The function is supersmooth of order 2, implying that it is infinitely differentiable.[9]

- The first derivative of ϕ(x) is ϕ′(x) = −x·ϕ(x); the second derivative is ϕ′′(x) = (x² − 1)ϕ(x). More generally, the n-th derivative is \( \phi^{(n)}(x) = (-1)^n \mathit{He}_n(x)\,\phi(x) \), where Heₙ is the probabilists' Hermite polynomial of order n.[10]
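The derivative formula is easy to check numerically. The sketch below (plain Python; `hermite_he` is a hypothetical helper built from the standard three-term recurrence for the probabilists' Hermite polynomials) compares the Hermite expression with central finite differences and confirms that ϕ″ vanishes at the inflection points ±1.

```python
import math


def phi(x: float) -> float:
    """Standard normal density."""
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)


def hermite_he(n: int, x: float) -> float:
    """Probabilists' Hermite polynomial He_n(x) via He_{k+1} = x*He_k - k*He_{k-1}."""
    if n == 0:
        return 1.0
    prev, cur = 1.0, x  # He_0, He_1
    for k in range(1, n):
        prev, cur = cur, x * cur - k * prev
    return cur


def phi_derivative(n: int, x: float) -> float:
    """n-th derivative of phi from the Hermite formula."""
    return (-1) ** n * hermite_he(n, x) * phi(x)


# Compare with central finite differences at an arbitrary point.
x, h = 0.7, 1e-4
fd1 = (phi(x + h) - phi(x - h)) / (2 * h)
fd2 = (phi(x + h) - 2 * phi(x) + phi(x - h)) / h**2
print(fd1, phi_derivative(1, x))
print(fd2, phi_derivative(2, x))

# He_2(x) = x**2 - 1 vanishes at x = +/-1: the inflection points.
print(phi_derivative(2, 1.0), phi_derivative(2, -1.0))
```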

When σ² = 0, the density function does not exist. However, the distribution can still be described by a generalized function that defines a measure on the real line and can be used to calculate, for example, expected values:

\( f(x;\,\mu,0) = \delta(x-\mu), \)

where δ is the Dirac delta function: informally, δ(x − μ) is infinite at x = μ, zero elsewhere, and integrates to one.

Cumulative distribution function

The cumulative distribution function (CDF) gives the probability that a random variable falls in the interval (−∞, x].

The CDF of the standard normal distribution is denoted with the capital Greek letter Φ (phi), and can be computed as an integral of the probability density function:

\( \Phi(x) = \frac{1}{\sqrt{2\pi}} \int_{-\infty}^x e^{-t^2/2} \, dt = \frac12\left[\, 1 + \operatorname{erf}\left(\frac{x}{\sqrt{2}}\right)\,\right],\quad x\in\mathbb{R}. \)

This integral cannot be expressed in terms of elementary functions, so Φ is usually written as a transformation of the error function erf, a special function. Numerical methods for calculating the standard normal CDF are discussed below. For a generic normal random variable with mean μ and variance σ² > 0, the CDF is

\( F(x;\,\mu,\sigma^2) = \Phi\left(\frac{x-\mu}{\sigma}\right) = \frac12\left[\, 1 + \operatorname{erf}\left(\frac{x-\mu}{\sigma\sqrt{2}}\right)\,\right],\quad x\in\mathbb{R}. \)

The complement of the standard normal CDF, Q(x) = 1 − Φ(x), is referred to as the Q-function, especially in engineering texts.[11][12] This represents the upper tail probability of the Gaussian distribution: that is, the probability that a standard normal random variable X is greater than the number x. Other definitions of the Q-function, all of which are simple transformations of Φ, are also used occasionally.[13]
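Both formulas map directly onto the error functions in Python's standard `math` module. In the sketch below the names `Phi`, `normal_cdf` and `Q` are our own; `statistics.NormalDist` (Python 3.8+) is used only as a cross-check. Computing Q through `math.erfc` rather than as 1 − Φ(x) keeps tiny upper-tail probabilities from rounding to zero.

```python
import math
from statistics import NormalDist


def Phi(x: float) -> float:
    """Standard normal CDF written through the error function."""
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


def normal_cdf(x: float, mu: float, sigma: float) -> float:
    """CDF of N(mu, sigma**2) for sigma > 0, by standardizing x."""
    return Phi((x - mu) / sigma)


def Q(x: float) -> float:
    """Upper tail 1 - Phi(x); erfc avoids the cancellation in 1 - Phi(x) for large x."""
    return 0.5 * math.erfc(x / math.sqrt(2))


print(normal_cdf(3.0, 1.0, 2.0), NormalDist(1.0, 2.0).cdf(3.0))  # both about 0.8413
print(1 - Phi(10.0), Q(10.0))  # 0.0 versus roughly 7.6e-24
```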

Properties:

- The standard normal CDF is 2-fold rotationally symmetric around the point (0, ½): Φ(−x) = 1 − Φ(x).

- The derivative of Φ(x) is equal to the standard normal pdf ϕ(x): Φ′(x) = ϕ(x).

- An antiderivative of Φ(x) is ∫ Φ(x) dx = x Φ(x) + ϕ(x) + C.

For a normal distribution with zero variance, the CDF is the Heaviside step function (with H(0) = 1 convention):

\( F(x;\,\mu,0) = \mathbf{1}\{x\geq\mu\}\,. \)
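The symmetry and antiderivative identities for Φ can be checked numerically. The following plain-Python sketch (helper names are hypothetical) tests Φ(−x) = 1 − Φ(x) at a few points and compares a midpoint-rule integral of Φ over an interval with the difference of x Φ(x) + ϕ(x) at its endpoints.

```python
import math


def phi(x: float) -> float:
    """Standard normal density."""
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)


def Phi(x: float) -> float:
    """Standard normal CDF."""
    return 0.5 * (1 + math.erf(x / math.sqrt(2)))


def Phi_antiderivative(x: float) -> float:
    """x*Phi(x) + phi(x), an antiderivative of Phi (constant of integration omitted)."""
    return x * Phi(x) + phi(x)


# Symmetry about the point (0, 1/2).
for x in (0.3, 1.0, 2.5):
    assert abs(Phi(-x) - (1 - Phi(x))) < 1e-12

# Integrate Phi over [a, b] with the midpoint rule and compare with the antiderivative.
a, b, n = -1.5, 2.0, 10_000
h = (b - a) / n
numeric = h * sum(Phi(a + (k + 0.5) * h) for k in range(n))
print(numeric, Phi_antiderivative(b) - Phi_antiderivative(a))
```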

Quantile function

The quantile function of a distribution is the inverse of the CDF. The quantile function of the standard normal distribution is called the probit function, and can be expressed in terms of the inverse error function:

\( \Phi^{-1}(p) \equiv z_p = \sqrt2\;\operatorname{erf}^{-1}(2p - 1), \quad p\in(0,1). \)

Quantiles of the standard normal distribution are commonly denoted \( z_p \). The quantile \( z_p \) is the value such that a standard normal random variable X falls in the interval \( (-\infty, z_p] \) with probability exactly p. The quantiles are used in hypothesis testing, construction of confidence intervals and Q-Q plots. The most "famous" normal quantile is \( z_{0.975} \approx 1.96 \): a standard normal random variable is greater than 1.96 in absolute value in about 5% of cases.

For a normal random variable with mean μ and variance σ², the quantile function is

\( F^{-1}(p;\,\mu,\sigma^2) = \mu + \sigma\Phi^{-1}(p) = \mu + \sigma\sqrt2\,\operatorname{erf}^{-1}(2p - 1), \quad p\in(0,1). \)
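Python's standard library has no inverse error function, but `statistics.NormalDist.inv_cdf` (Python 3.8+) computes Φ⁻¹ directly. A minimal sketch, with `normal_quantile` a hypothetical helper implementing the location-scale formula above:

```python
from statistics import NormalDist

std = NormalDist()  # mu = 0, sigma = 1
z = std.inv_cdf(0.975)
print(round(z, 6))  # 1.959964

# The two-sided tail probability beyond +/- z is 5%.
print(2 * (1 - std.cdf(z)))


def normal_quantile(p: float, mu: float, sigma: float) -> float:
    """F^{-1}(p; mu, sigma^2) = mu + sigma * Phi^{-1}(p)."""
    return mu + sigma * std.inv_cdf(p)


print(normal_quantile(0.975, 10.0, 2.0), NormalDist(10.0, 2.0).inv_cdf(0.975))
```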

Characteristic function and moment generating function

The characteristic function \( \varphi_X(t) \) of a random variable X is defined as the expected value of \( e^{itX} \), where i is the imaginary unit and t ∈ ℝ is the argument of the characteristic function. Thus the characteristic function is the Fourier transform of the density of X (up to the sign convention used in the exponent). For a normally distributed X with mean μ and variance σ², the characteristic function is[14]

\( \varphi(t;\,\mu,\sigma^2) = \int_{-\infty}^\infty\! e^{itx}\frac{1}{\sqrt{2\pi\sigma^2}}e^{-\frac12 (x-\mu)^2/\sigma^2} dx = e^{i\mu t - \frac12 \sigma^2t^2} . \)

The characteristic function can be analytically extended to the entire complex plane: one defines \( \varphi(z) = e^{i\mu z - \frac12\sigma^2 z^2} \) for all z ∈ ℂ.[15]

The moment generating function is defined as the expected value of \( e^{tX} \). For a normal distribution, the moment generating function exists and is equal to

\( M(t;\, \mu,\sigma^2) = \operatorname{E}[e^{tX}] = \varphi(-it;\, \mu,\sigma^2) = e^{ \mu t + \frac12 \sigma^2 t^2 }. \)

The cumulant generating function is the logarithm of the moment generating function:

\( g(t;\,\mu,\sigma^2) = \ln M(t;\,\mu,\sigma^2) = \mu t + \frac{1}{2} \sigma^2 t^2. \)

Since this is a quadratic polynomial in t, only the first two cumulants are nonzero.
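The closed form for M(t) can be compared with a direct numerical evaluation of E[e^{tX}]. In the plain-Python sketch below (helper names are hypothetical), truncating the integral to μ ± 12σ is our assumption and is adequate only for moderate t, since the integrand's mass shifts to μ + tσ².

```python
import math


def mgf(t: float, mu: float, sigma2: float) -> float:
    """Closed-form M(t) = exp(mu*t + sigma2*t**2/2)."""
    return math.exp(mu * t + 0.5 * sigma2 * t * t)


def mgf_numeric(t: float, mu: float, sigma2: float, n: int = 20_000) -> float:
    """Midpoint-rule estimate of E[exp(tX)], truncating the integral to mu +/- 12 sigma."""
    sigma = math.sqrt(sigma2)
    lo, hi = mu - 12 * sigma, mu + 12 * sigma
    h = (hi - lo) / n
    total = 0.0
    for k in range(n):
        x = lo + (k + 0.5) * h
        total += math.exp(t * x - (x - mu) ** 2 / (2 * sigma2))
    return total * h / math.sqrt(2 * math.pi * sigma2)


t, mu, sigma2 = 0.5, 1.0, 1.5
print(mgf(t, mu, sigma2), mgf_numeric(t, mu, sigma2))
# The cumulant generating function is the quadratic mu*t + sigma2*t**2/2.
print(math.log(mgf_numeric(t, mu, sigma2)), mu * t + 0.5 * sigma2 * t * t)
```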

Moments

See also: List of integrals of Gaussian functions

The normal distribution has moments of all orders. That is, for a normally distributed X with mean μ and variance σ², the expectation \( \mathrm{E}[|X|^p] \) exists and is finite for all p such that Re[p] > −1. Usually we are interested only in moments of integer orders: p = 1, 2, 3, ….

Central moments are the moments of X around its mean μ. Thus, a central moment of order p is the expected value of \( (X-\mu)^p \). Using standardization of normal random variables, this expectation equals \( \sigma^p\,\mathrm{E}[Z^p] \), where Z is standard normal:

\( \mathrm{E}\left[(X-\mu)^p\right] = \begin{cases} 0 & \text{if }p\text{ is odd,} \\ \sigma^p\,(p-1)!! & \text{if }p\text{ is even.} \end{cases} \)

Here n!! denotes the double factorial; for odd n it is the product of all odd numbers from n down to 1.

Central absolute moments are the moments of |X − μ|. They coincide with the central moments for all even orders, but are nonzero for odd p:

\( \operatorname{E}\left[|X-\mu|^p\right] = \sigma^p(p-1)!! \cdot \left.\begin{cases} \sqrt{2/\pi} & \text{if }p\text{ is odd}, \\ 1 & \text{if }p\text{ is even}, \end{cases}\right\} = \sigma^p \cdot \frac{2^{\frac{p}{2}}\Gamma\left(\frac{p+1}{2}\right)}{\sqrt{\pi}} \)

The last formula holds for any real p > −1, including non-integer values.
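The double-factorial and Gamma-function forms can be cross-checked in a few lines of plain Python. `double_factorial`, `central_moment` and `central_abs_moment` below are hypothetical helper names; the loop confirms that the two expressions for E[|X − μ|^p] agree for p = 1, …, 8.

```python
import math


def double_factorial(n: int) -> int:
    """n!! for n >= -1, using the convention (-1)!! = 0!! = 1."""
    result = 1
    while n > 1:
        result *= n
        n -= 2
    return result


def central_moment(p: int, sigma: float) -> float:
    """E[(X - mu)**p] for an integer p >= 0."""
    return 0.0 if p % 2 else sigma**p * double_factorial(p - 1)


def central_abs_moment(p: float, sigma: float) -> float:
    """E[|X - mu|**p] from the Gamma-function form, valid for real p > -1."""
    return sigma**p * 2 ** (p / 2) * math.gamma((p + 1) / 2) / math.sqrt(math.pi)


sigma = 1.7
for p in range(1, 9):
    if p % 2 == 0:
        expected = central_moment(p, sigma)  # even orders: same as the central moment
    else:
        expected = sigma**p * double_factorial(p - 1) * math.sqrt(2 / math.pi)
    assert math.isclose(central_abs_moment(p, sigma), expected, rel_tol=1e-12)

print(central_abs_moment(0.5, sigma))  # a non-integer order
```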

Raw moments and raw absolute moments are the moments of X and |X| respectively. The formulas for these moments are much more complicated, and are given in terms of confluent hypergeometric functions 1F1 and U.

\( \begin{align} & \operatorname{E} \left[ X^p \right] = \sigma^p \cdot (-i\sqrt{2}\operatorname{sgn}\mu)^p \; U\left( {-\frac{1}{2}p},\, \frac{1}{2},\, -\frac{1}{2}(\mu/\sigma)^2 \right), \\ & \operatorname{E} \left[ |X|^p \right] = \sigma^p \cdot 2^{\frac p 2} \frac {\Gamma\left(\frac{1+p}{2}\right)}{\sqrt\pi}\; {}_1F_1\left( {-\frac{1}{2}p},\, \frac{1}{2},\, -\frac{1}{2}(\mu/\sigma)^2 \right). \\ \end{align} \)

These expressions remain valid even if p is not integer. See also generalized Hermite polynomials.

The first two cumulants are μ and σ², respectively, whereas all higher-order cumulants are zero.

| Order | Raw moment | Central moment | Cumulant |
|---|---|---|---|
| 1 | μ | 0 | μ |
| 2 | μ² + σ² | σ² | σ² |
| 3 | μ³ + 3μσ² | 0 | 0 |
| 4 | μ⁴ + 6μ²σ² + 3σ⁴ | 3σ⁴ | 0 |
| 5 | μ⁵ + 10μ³σ² + 15μσ⁴ | 0 | 0 |
| 6 | μ⁶ + 15μ⁴σ² + 45μ²σ⁴ + 15σ⁶ | 15σ⁶ | 0 |
| 7 | μ⁷ + 21μ⁵σ² + 105μ³σ⁴ + 105μσ⁶ | 0 | 0 |
| 8 | μ⁸ + 28μ⁶σ² + 210μ⁴σ⁴ + 420μ²σ⁶ + 105σ⁸ | 105σ⁸ | 0 |
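Each raw moment in the table follows from the central moments by expanding X = μ + (X − μ) with the binomial theorem; only even powers of (X − μ) contribute. A minimal plain-Python sketch (helper names are ours) that regenerates the raw-moment column and checks two rows exactly with integer inputs:

```python
from math import comb


def double_factorial(n: int) -> int:
    """n!! for n >= -1, with (-1)!! = 1."""
    result = 1
    while n > 1:
        result *= n
        n -= 2
    return result


def raw_moment(p: int, mu, sigma):
    """E[X**p] = sum_k C(p, k) * mu**(p-k) * E[(X - mu)**k]; odd central moments vanish."""
    return sum(
        comb(p, k) * mu ** (p - k) * sigma**k * double_factorial(k - 1)
        for k in range(0, p + 1, 2)
    )


# Integer inputs keep the arithmetic exact, so table rows can be compared with ==.
mu, sigma = 2, 3
assert raw_moment(4, mu, sigma) == mu**4 + 6 * mu**2 * sigma**2 + 3 * sigma**4
assert raw_moment(8, mu, sigma) == (
    mu**8 + 28 * mu**6 * sigma**2 + 210 * mu**4 * sigma**4
    + 420 * mu**2 * sigma**6 + 105 * sigma**8
)
print([raw_moment(p, mu, sigma) for p in range(1, 9)])
```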

Properties

Standardizing normal random variables

Because the normal distribution is a location-scale family, it is possible to relate all normal random variables to the standard normal. For example, if X is normal with mean μ and variance σ², then

\( Z = \frac{X - \mu}{\sigma} \)

has mean zero and unit variance, that is Z has the standard normal distribution. Conversely, having a standard normal random variable Z we can always construct another normal random variable with specific mean μ and variance σ2:

\( X = \sigma Z + \mu. \, \)

This "standardizing" transformation is convenient, as it allows one to compute the PDF and especially the CDF of any normal distribution from a table of PDF and CDF values for the standard normal. They are related via

\( F_X(x) = \Phi\left(\frac{x-\mu}{\sigma}\right), \quad f_X(x) = \frac{1}{\sigma}\,\phi\left(\frac{x-\mu}{\sigma}\right). \)

Standard deviation and confidence intervals

Dark blue is less than one standard deviation from the mean. For the normal distribution, this accounts for about 68% of the set, while two standard deviations from the mean (medium and dark blue) account for about 95%, and three standard deviations (light, medium, and dark blue) account for about 99.7%.

For more details on this topic, see 68-95-99.7 rule (Empirical Rule).

About 68% of values drawn from a normal distribution are within one standard deviation σ of the mean; about 95% of the values lie within two standard deviations; and about 99.7% are within three standard deviations. This fact is known as the 68-95-99.7 rule, or the empirical rule, or the 3-sigma rule. To be more precise, the area under the bell curve between μ − nσ and μ + nσ is given by

\( F(\mu+n\sigma;\,\mu,\sigma^2) - F(\mu-n\sigma;\,\mu,\sigma^2) = \Phi(n)-\Phi(-n) = \mathrm{erf}\left(\frac{n}{\sqrt{2}}\right), \)

where erf is the error function. To 12 decimal places, the values for the 1-, 2-, up to 6-sigma points are:[16]

| n | \( \mathrm{erf}\left(\frac{n}{\sqrt{2}}\right) \) | i.e. \( 1-\mathrm{erf}\left(\frac{n}{\sqrt{2}}\right) \) | or 1 in … |
|---|---|---|---|
| 1 | 0.682689492137 | 0.317310507863 | 3.15148718753 |
| 2 | 0.954499736104 | 0.045500263896 | 21.9778945080 |
| 3 | 0.997300203937 | 0.002699796063 | 370.398347345 |
| 4 | 0.999936657516 | 0.000063342484 | 15,787.1927673 |
| 5 | 0.999999426697 | 0.000000573303 | 1,744,277.89362 |
| 6 | 0.999999998027 | 0.000000001973 | 506,797,345.897 |
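The table can be regenerated with the standard library's `math.erf` and `math.erfc`; using `erfc` for the complement keeps the small tail probabilities accurate. A minimal sketch:

```python
import math

rows = []
for n in range(1, 7):
    inside = math.erf(n / math.sqrt(2))    # P(|X - mu| <= n*sigma)
    outside = math.erfc(n / math.sqrt(2))  # 1 - inside, computed without cancellation
    rows.append((n, inside, outside, 1 / outside))
    print(f"{n}  {inside:.12f}  {outside:.12f}  1 in {1 / outside:,.1f}")
```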

The next table gives the reverse relation of sigma multiples corresponding to a few often used values for the area under the bell curve. These values are useful to determine (asymptotic) confidence intervals of the specified levels based on normally distributed (or asymptotically normal) estimators:[17]

| \( \mathrm{erf}\left(\frac{n}{\sqrt{2}}\right) \) | n | \( \mathrm{erf}\left(\frac{n}{\sqrt{2}}\right) \) | n |
|---|---|---|---|
| 0.80 | 1.281551565545 | 0.999 | 3.290526731492 |
| 0.90 | 1.644853626951 | 0.9999 | 3.890591886413 |
| 0.95 | 1.959963984540 | 0.99999 | 4.417173413469 |
| 0.98 | 2.326347874041 | 0.999999 | 4.891638475699 |
| 0.99 | 2.575829303549 | 0.9999999 | 5.326723886384 |
| 0.995 | 2.807033768344 | 0.99999999 | 5.730728868236 |
| 0.998 | 3.090232306168 | 0.999999999 | 6.109410204869 |

where \( \mathrm{erf}(n/\sqrt{2}) \) is the proportion of values that fall within the interval μ ± nσ, and n is the multiple of the standard deviation that specifies the half-width of that interval.
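Since Φ(n) − Φ(−n) = 2Φ(n) − 1, the multiple n for a given coverage is Φ⁻¹((1 + coverage)/2). A minimal sketch with `statistics.NormalDist` (Python 3.8+); `sigma_multiple` is a hypothetical helper name:

```python
from statistics import NormalDist


def sigma_multiple(coverage: float) -> float:
    """n with erf(n / sqrt(2)) = coverage, i.e. n = Phi^{-1}((1 + coverage) / 2)."""
    return NormalDist().inv_cdf((1 + coverage) / 2)


for coverage in (0.80, 0.90, 0.95, 0.98, 0.99, 0.995, 0.998, 0.999):
    print(f"{coverage:<6} {sigma_multiple(coverage):.12f}")
```

For coverages very close to 1, the floating-point rounding of the coverage value itself limits how many digits of n this approach can reproduce.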

Central limit theorem

As the number of discrete events increases, the function begins to resemble a normal distribution

Comparison of probability density functions, p(k) for the sum of n fair 6-sided dice to show their convergence to a normal distribution with increasing n, in accordance with the central limit theorem. In the bottom-right graph, smoothed profiles of the previous graphs are rescaled, superimposed and compared with a normal distribution (black curve).

Main article: Central limit theorem

The theorem states that under certain (fairly common) conditions, the sum of a large number of random variables will have an approximately normal distribution. For example, if (x1, …, xn) is a sequence of iid random variables, each having mean μ and variance σ², then the central limit theorem states that

\( \sqrt{n}\left( \frac{1}{n}\sum_{i=1}^n x_i - \mu \right)\ \xrightarrow{d}\ \mathcal{N}(0,\,\sigma^2). \)

The theorem can still hold when the summands xi are not iid, although some constraints on the degree of dependence and the growth rate of moments still have to be imposed.
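A small simulation makes the statement concrete. The sketch below (plain Python, standard library only) assumes Uniform(0, 1) summands rather than dice, to avoid lattice effects in the comparison; dividing by σ rescales the limit to N(0, 1). The seed, n = 30 and the number of trials are arbitrary choices.

```python
import random
import statistics


def standardized_means(n: int, trials: int, rng: random.Random) -> list[float]:
    """sqrt(n) * (sample mean - mu) / sigma for n iid Uniform(0, 1) summands."""
    mu, sigma = 0.5, (1 / 12) ** 0.5  # mean and standard deviation of Uniform(0, 1)
    out = []
    for _ in range(trials):
        mean = sum(rng.random() for _ in range(n)) / n
        out.append(n**0.5 * (mean - mu) / sigma)
    return out


rng = random.Random(12345)  # fixed seed for reproducibility
z = standardized_means(30, 20_000, rng)
within_one = sum(abs(v) <= 1 for v in z) / len(z)
# Expect roughly mean 0, standard deviation 1, and about 68.3% within one unit.
print(statistics.fmean(z), statistics.pstdev(z), within_one)
```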

The importance of the central limit theorem cannot be overemphasized. A great number of test statistics, scores, and estimators encountered in practice contain sums of certain random variables, and even more estimators can be represented as sums of random variables through the use of influence functions. All of these quantities are governed by the central limit theorem and will have asymptotically normal distributions as a result.

Another practical consequence of the central limit theorem is that certain other distributions can be approximated by the normal distribution, for example:

- The binomial distribution B(n, p) is approximately normal N(np, np(1 − p)) for large n and for p not too close to zero or one.

- The Poisson(λ) distribution is approximately normal N(λ, λ) for large values of λ.

- The chi-squared distribution χ²(k) is approximately normal N(k, 2k) for large k.

- The Student's t-distribution t(ν) is approximately normal N(0, 1) when ν is large.

Whether these approximations are sufficiently accurate depends on the purpose for which they are needed, and the rate of convergence to the normal distribution. It is typically the case that such approximations are less accurate in the tails of the distribution.
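To see both the quality of the approximation and its weakness in the tails, the sketch below (plain Python) compares the exact binomial CDF with the normal approximation for B(100, 0.3). The +0.5 continuity correction is a common refinement that is not mentioned above; it is our assumption here, and the helper names are hypothetical.

```python
import math


def binom_cdf(k: int, n: int, p: float) -> float:
    """Exact P(B <= k) for B ~ Binomial(n, p)."""
    return sum(math.comb(n, j) * p**j * (1 - p) ** (n - j) for j in range(k + 1))


def normal_approx_cdf(k: int, n: int, p: float) -> float:
    """N(np, np(1-p)) approximation, with a +0.5 continuity correction (our added choice)."""
    mu, sd = n * p, math.sqrt(n * p * (1 - p))
    return 0.5 * (1 + math.erf((k + 0.5 - mu) / (sd * math.sqrt(2))))


n, p = 100, 0.3
for k in (10, 20, 30, 40):
    exact, approx = binom_cdf(k, n, p), normal_approx_cdf(k, n, p)
    print(f"k={k:2d}  exact={exact:.3e}  normal={approx:.3e}  rel.err={abs(approx - exact) / exact:.2%}")
```

The relative error is small near the center of the distribution but grows rapidly in the lower tail, in line with the remark above.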

A general upper bound for the approximation error in the central limit theorem is given by the Berry–Esseen theorem; improvements of the approximation are given by the Edgeworth expansions.

Miscellaneous

- The family of normal distributions is closed under linear transformations. That is, if X is normally distributed with mean μ and variance σ², then a linear transform aX + b (for some real numbers a and b) is also normally distributed:

  \( aX + b\ \sim\ \mathcal{N}(a\mu+b,\, a^2\sigma^2). \)

- If X₁ and X₂ are two independent normal random variables, with means μ₁, μ₂ and variances σ₁², σ₂², then their linear combination is also normally distributed:

  \( aX_1 + bX_2 \ \sim\ \mathcal{N}(a\mu_1+b\mu_2,\, a^2\sigma_1^2 + b^2\sigma_2^2). \)

- The converse of the previous property is also true: if X₁ and X₂ are independent and their sum X₁ + X₂ is distributed normally, then both X₁ and X₂ must also be normal.[18] This is known as Cramér's decomposition theorem. The interpretation of this property is that a normal distribution is only divisible by other normal distributions. Another application of this property is in connection with the central limit theorem: although the CLT asserts that the distribution of a sum of arbitrary non-normal iid random variables is approximately normal, Cramér's theorem shows that it can never become exactly normal for any finite number of summands.[19]

- If the characteristic function φX of some random variable X is of the form \( \varphi_X(t) = e^{Q(t)} \), where Q(t) is a polynomial, then the Marcinkiewicz theorem (named after Józef Marcinkiewicz) asserts that Q can be at most a quadratic polynomial, and therefore X is a normal random variable.[20] The consequence of this result is that the normal distribution is the only distribution with a finite number (two) of non-zero cumulants.

- If X and Y are jointly normal and uncorrelated, then they are independent. The requirement that X and Y be jointly normal is essential; without it the property does not hold.[citation needed][proof] For non-normal random variables, uncorrelatedness does not in general imply independence.

- If X and Y are independent N(μ, σ²) random variables, then X + Y and X − Y are also independent (this follows from the polarization identity), and they are identically distributed when μ = 0.[21] This property uniquely characterizes the normal distribution, as can be seen from Bernstein's theorem: if X and Y are independent and such that X + Y and X − Y are also independent, then both X and Y must necessarily have normal distributions.

  More generally, if X₁, …, Xₙ are independent random variables, then two linear combinations ∑aₖXₖ and ∑bₖXₖ (with all coefficients nonzero) are independent if and only if all the Xₖ are normal and \( \sum_k a_k b_k \sigma_k^2 = 0 \), where σₖ² denotes the variance of Xₖ.[22]

- The normal distribution is infinitely divisible:[23] for a normally distributed X with mean μ and variance σ² we can find n independent random variables {X₁, …, Xₙ}, each distributed normally with mean μ/n and variance σ²/n, such that

  \( X_1 + X_2 + \cdots + X_n \ \sim\ \mathcal{N}(\mu, \sigma^2). \)

- The normal distribution is stable (with exponent α = 2): if X₁, X₂ are two independent N(μ, σ²) random variables and a, b are arbitrary real numbers, then

  \( aX_1 + bX_2 \ \sim\ \sqrt{a^2+b^2}\cdot X_3\ +\ (a+b-\sqrt{a^2+b^2})\mu, \)

  where X₃ is also N(μ, σ²).

- The Kullback–Leibler divergence between two normal distributions X₁ ∼ N(μ₁, σ₁²) and X₂ ∼ N(μ₂, σ₂²) is given by:[24]

  \( D_\mathrm{KL}( X_1 \,\|\, X_2 ) = \frac{(\mu_1 - \mu_2)^2}{2\sigma_2^2} \,+\, \frac12\left(\, \frac{\sigma_1^2}{\sigma_2^2} - 1 - \ln\frac{\sigma_1^2}{\sigma_2^2} \,\right)\ . \)

  The squared Hellinger distance between the same two distributions is

  \( H^2(X_1,X_2) = 1 \,-\, \sqrt{\frac{2\sigma_1\sigma_2}{\sigma_1^2+\sigma_2^2}} \; e^{-\frac{1}{4}\frac{(\mu_1-\mu_2)^2}{\sigma_1^2+\sigma_2^2}}\ . \)

- The Fisher information matrix for a normal distribution, with respect to the parameters (μ, σ²), is diagonal and takes the form

  \( \mathcal I = \begin{pmatrix} \frac{1}{\sigma^2} & 0 \\ 0 & \frac{1}{2\sigma^4} \end{pmatrix} \)

- Normal distributions belong to an exponential family with natural parameters \( \scriptstyle\theta_1=\frac{\mu}{\sigma^2} \) and \( \scriptstyle\theta_2=\frac{-1}{2\sigma^2} \), and natural statistics x and x². The dual expectation parameters for the normal distribution are η₁ = μ and η₂ = μ² + σ².

- The conjugate prior of the mean of a normal distribution with known variance is another normal distribution.[25] Specifically, if x₁, …, xₙ are iid N(μ, σ²) with σ² known and the prior is μ ∼ N(μ₀, σ₀²), then the posterior distribution of μ is

  \( \mu | x_1,\ldots,x_n\ \sim\ \mathcal{N}\left( \frac{\frac{\sigma^2}{n}\mu_0 + \sigma_0^2\bar{x}}{\frac{\sigma^2}{n}+\sigma_0^2},\ \left( \frac{n}{\sigma^2} + \frac{1}{\sigma_0^2} \right)^{\!-1} \right), \)

  where x̄ is the sample mean.

- Of all probability distributions over the reals with mean μ and variance σ2, the normal distribution N(μ, σ2) is the one with the maximum entropy.[26]

- The family of normal distributions forms a manifold with constant curvature −1. The same family is flat with respect to the (±1)-connections ∇(e) and ∇(m).[27]
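The closed forms for the KL divergence, the squared Hellinger distance and the conjugate posterior of the mean are short enough to code directly. In the plain-Python sketch below all function names are hypothetical; the two divergences are cross-checked against a midpoint-rule integration of their definitions, \( \int p \ln(p/q)\,dx \) and \( 1 - \int \sqrt{pq}\,dx \), and the parameter values are arbitrary.

```python
import math


def kl_normal(mu1, s1, mu2, s2):
    """D_KL(N(mu1, s1**2) || N(mu2, s2**2)) from the closed form above."""
    r = s1**2 / s2**2
    return (mu1 - mu2) ** 2 / (2 * s2**2) + 0.5 * (r - 1 - math.log(r))


def hellinger_sq(mu1, s1, mu2, s2):
    """Squared Hellinger distance between the same two normals."""
    v = s1**2 + s2**2
    return 1 - math.sqrt(2 * s1 * s2 / v) * math.exp(-0.25 * (mu1 - mu2) ** 2 / v)


def pdf(x, mu, s):
    return math.exp(-0.5 * ((x - mu) / s) ** 2) / (s * math.sqrt(2 * math.pi))


def integrate(f, lo, hi, n=40_000):
    """Midpoint rule; adequate here because the integrands are smooth and decay fast."""
    h = (hi - lo) / n
    return h * sum(f(lo + (k + 0.5) * h) for k in range(n))


mu1, s1, mu2, s2 = 0.0, 1.0, 1.5, 2.0
lo, hi = -20.0, 25.0  # both densities are negligible outside this range
kl_num = integrate(lambda x: pdf(x, mu1, s1) * math.log(pdf(x, mu1, s1) / pdf(x, mu2, s2)), lo, hi)
h2_num = 1 - integrate(lambda x: math.sqrt(pdf(x, mu1, s1) * pdf(x, mu2, s2)), lo, hi)
print(kl_normal(mu1, s1, mu2, s2), kl_num)
print(hellinger_sq(mu1, s1, mu2, s2), h2_num)


def posterior_of_mean(xs, sigma2, mu0, s0_sq):
    """Posterior (mean, var) of mu for iid N(mu, sigma2) data, known sigma2, prior N(mu0, s0_sq)."""
    n = len(xs)
    xbar = sum(xs) / n
    mean = (sigma2 / n * mu0 + s0_sq * xbar) / (sigma2 / n + s0_sq)
    var = 1 / (n / sigma2 + 1 / s0_sq)
    return mean, var


print(posterior_of_mean([1.0, 2.0, 3.0], sigma2=4.0, mu0=0.0, s0_sq=1.0))
```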

Related distributions

Operations on a single random variable

If X is distributed normally with mean μ and variance σ2, then

- The exponential of X is distributed log-normally: \( e^X \sim \ln\mathcal{N}(\mu, \sigma^2) \).

- The absolute value of X has the folded normal distribution: |X| ∼ N_f(μ, σ²). If μ = 0 this is known as the half-normal distribution.

- The square of X/σ has the noncentral chi-squared distribution with one degree of freedom: \( X^2/\sigma^2 \sim \chi^2_1(\mu^2/\sigma^2) \). If μ = 0, the distribution is called simply chi-squared.

- The distribution of the variable X restricted to an interval [a, b] is called the truncated normal distribution.

- \( (X-\mu)^{-2} \) has a Lévy distribution with location 0 and scale σ⁻².

Combination of two independent random variables

If X1 and X2 are two independent standard normal random variables, then

- Their sum and their difference are each distributed normally with mean zero and variance two: X₁ ± X₂ ∼ N(0, 2).

- Their product Z = X₁·X₂ follows the "product-normal" distribution[28] with density function \( f_Z(z) = \pi^{-1}K_0(|z|) \), where K₀ is the modified Bessel function of the second kind. This distribution is symmetric around zero, unbounded at z = 0, and has the characteristic function \( \varphi_Z(t) = (1 + t^2)^{-1/2} \).

- Their ratio follows the standard Cauchy distribution: X1 ÷ X2 ∼ Cauchy(0, 1).

- Their Euclidean norm \(\scriptstyle\sqrt{X_1^2\,+\,X_2^2} \) has the Rayleigh distribution, also known as the chi distribution with 2 degrees of freedom.
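A quick Monte Carlo check of three of these facts, in plain Python with the standard library only: the sum should have variance 2, |X₁/X₂| should have median 1 (the quartiles of the standard Cauchy distribution are ±1), and the Euclidean norm should have the Rayleigh median √(2 ln 2) ≈ 1.1774. The seed and sample size are arbitrary choices.

```python
import math
import random
import statistics

rng = random.Random(2024)  # fixed seed for reproducibility
N = 100_000
pairs = [(rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)) for _ in range(N)]

sum_var = statistics.variance([a + b for a, b in pairs])          # theory: 2
ratio_med = statistics.median(abs(a / b) for a, b in pairs)       # |Cauchy(0, 1)| has median 1
norm_med = statistics.median(math.hypot(a, b) for a, b in pairs)  # Rayleigh median sqrt(2 ln 2)

print(sum_var, ratio_med, norm_med, math.sqrt(2 * math.log(2)))
```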

Combination of two or more independent random variables

- If X₁, X₂, …, Xₙ are independent standard normal random variables, then the sum of their squares has the chi-squared distribution with n degrees of freedom: \( \scriptstyle X_1^2 + \cdots + X_n^2\ \sim\ \chi_n^2. \)

- If X₁, X₂, …, Xₙ are independent normally distributed random variables, each with mean μ and variance σ², then their sample mean is independent of the sample standard deviation, which can be demonstrated using Basu's theorem or Cochran's theorem. The standardized ratio of these two quantities has Student's t-distribution with n − 1 degrees of freedom:

  \( t = \frac{\overline X - \mu}{S/\sqrt{n}} = \frac{\frac{1}{n}(X_1+\cdots+X_n) - \mu}{\sqrt{\frac{1}{n(n-1)}\left[(X_1-\overline X)^2+\cdots+(X_n-\overline X)^2\right]}} \ \sim\ t_{n-1}. \)

- If X₁, …, Xₙ, Y₁, …, Yₘ are independent standard normal random variables, then the ratio of their normalized sums of squares has the F-distribution with (n, m) degrees of freedom:

  \( F = \frac{\left(X_1^2+X_2^2+\cdots+X_n^2\right)/n}{\left(Y_1^2+Y_2^2+\cdots+Y_m^2\right)/m}\ \sim\ F_{n,\,m}. \)
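The t statistic is straightforward to compute. The sketch below (plain Python; the helper names are hypothetical and the data are made up) evaluates it once with `statistics.stdev`, which uses the n − 1 denominator, and once term by term as in the formula above, to show that the two agree. Turning t into a p-value would additionally need the t-distribution CDF, which Python's standard library does not provide.

```python
import math
import statistics


def t_statistic(xs: list[float], mu: float) -> float:
    """(sample mean - mu) / (S / sqrt(n)), where S uses the n - 1 denominator."""
    return (statistics.fmean(xs) - mu) / (statistics.stdev(xs) / math.sqrt(len(xs)))


def t_statistic_expanded(xs: list[float], mu: float) -> float:
    """The same quantity written term by term as in the formula above."""
    n = len(xs)
    xbar = sum(xs) / n
    ss = sum((x - xbar) ** 2 for x in xs)
    return (xbar - mu) / math.sqrt(ss / (n * (n - 1)))


sample = [4.9, 5.3, 5.1, 4.7, 5.6, 5.0]  # made-up numbers for illustration
print(t_statistic(sample, 5.0), t_statistic_expanded(sample, 5.0))
```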

Operations on the density function

The split normal distribution is most directly defined in terms of joining scaled sections of the density functions of different normal distributions and rescaling the density to integrate to one. The truncated normal distribution results from rescaling a section of a single density function.

Extensions

The notion of normal distribution, being one of the most important distributions in probability theory, has been extended far beyond the standard framework of the univariate (that is, one-dimensional) case. All these extensions are also called normal or Gaussian laws, so a certain ambiguity in names exists.

- Multivariate normal distribution describes the Gaussian law in the k-dimensional Euclidean space. A vector X ∈ ℝᵏ is multivariate-normally distributed if any linear combination of its components \( \sum_{j=1}^k a_j X_j \) has a (univariate) normal distribution. The variance of X is a k×k symmetric positive semi-definite matrix V (positive-definite in the non-degenerate case).

- Rectified Gaussian distribution: a rectified version of the normal distribution with all negative elements reset to 0.

- Complex normal distribution deals with complex normal vectors. A complex vector X ∈ ℂᵏ is said to be normal if both its real and imaginary components jointly possess a 2k-dimensional multivariate normal distribution. The variance-covariance structure of X is described by two matrices: the variance matrix Γ and the relation matrix C.

- Matrix normal distribution describes the case of normally distributed matrices.

- Gaussian processes are the normally distributed stochastic processes. These can be viewed as elements of some infinite-dimensional Hilbert space H, and thus are the analogues of multivariate normal vectors for the case k = ∞. A random element h ∈ H is said to be normal if for any constant a ∈ H the scalar product (a, h) has a (univariate) normal distribution. The variance structure of such Gaussian random element can be described in terms of the linear covariance operator K: H → H. Several Gaussian processes became popular enough to have their own names:

  - Brownian motion,

  - Brownian bridge,

  - Ornstein–Uhlenbeck process.

- Gaussian q-distribution is an abstract mathematical construction which represents a "q-analogue" of the normal distribution.

- The q-Gaussian is an analogue of the Gaussian distribution, in the sense that it maximises the Tsallis entropy, and is one type of Tsallis distribution. Note that this distribution is different from the Gaussian q-distribution above.

One of the main practical uses of the Gaussian law is to model the empirical distributions of many different random variables encountered in practice. In such cases a possible extension would be a richer family of distributions, having more than two parameters and therefore being able to fit the empirical distribution more accurately. Examples of such extensions include:

- Pearson distribution is a four-parameter family of probability distributions that extends the normal law to include different skewness and kurtosis values.

Normality tests


Normality tests assess the likelihood that the given data set \( \{x_1, \ldots, x_n\} \) comes from a normal distribution. Typically the null hypothesis \( H_0 \) is that the observations are distributed normally with unspecified mean μ and variance σ², versus the alternative \( H_a \) that the distribution is arbitrary. A great number of tests (over 40) have been devised for this problem; the more prominent of them are outlined below:

- "Visual" tests are more intuitively appealing but subjective at the same time, as they rely on informal human judgement to accept or reject the null hypothesis.

- Q-Q plot: a plot of the sorted values from the data set against the expected values of the corresponding quantiles from the standard normal distribution. That is, it is a plot of points of the form \( (\Phi^{-1}(p_k), x_{(k)}) \), where the plotting points are \( p_k = (k - \alpha)/(n + 1 - 2\alpha) \) and α is an adjustment constant, which can be anything between 0 and 1. If the null hypothesis is true, the plotted points should approximately lie on a straight line.

- P-P plot: similar to the Q-Q plot, but used much less frequently. This method consists of plotting the points \( (\Phi(z_{(k)}), p_k) \), where \( \scriptstyle z_{(k)} = (x_{(k)}-\hat\mu)/\hat\sigma \). For normally distributed data this plot should lie on a 45° line between (0, 0) and (1, 1).

- Shapiro–Wilk test employs the fact that the line in the Q-Q plot has the slope of σ. The test compares the square of the least-squares estimate of that slope with the value of the sample variance, and rejects the null hypothesis if these two quantities differ significantly.

- Normal probability plot (rankit plot)

- Moment tests:

- D'Agostino's K-squared test

- Jarque–Bera test

- Empirical distribution function tests:

- Lilliefors test (an adaptation of the Kolmogorov–Smirnov test)

- Anderson–Darling test

Estimation of parameters

It is often the case that we do not know the parameters of the normal distribution, but instead want to estimate them. That is, having a sample \( (x_1, \ldots, x_n) \) from a normal \( \mathcal{N}(\mu, \sigma^2) \) population, we would like to learn the approximate values of the parameters μ and σ². The standard approach to this problem is the maximum likelihood method, which requires maximization of the log-likelihood function:

\( \ln\mathcal{L}(\mu,\sigma^2) = \sum_{i=1}^n \ln f(x_i;\,\mu,\sigma^2) = -\frac{n}{2}\ln(2\pi) - \frac{n}{2}\ln\sigma^2 - \frac{1}{2\sigma^2}\sum_{i=1}^n (x_i-\mu)^2. \)

Taking derivatives with respect to μ and σ² and solving the resulting system of first-order conditions yields the maximum likelihood estimates:

\( \hat{\mu} = \overline{x} \equiv \frac{1}{n}\sum_{i=1}^n x_i, \qquad \hat{\sigma}^2 = \frac{1}{n} \sum_{i=1}^n (x_i - \overline{x})^2. \)

The estimator \( \scriptstyle\hat\mu \) is called the sample mean, since it is the arithmetic mean of all observations. The statistic \( \scriptstyle\overline{x} \) is complete and sufficient for μ, and therefore, by the Lehmann–Scheffé theorem, \( \scriptstyle\hat\mu \) is the uniformly minimum variance unbiased (UMVU) estimator.[29] In finite samples it is distributed normally:

\( \hat\mu \ \sim\ \mathcal{N}(\mu,\,\,\sigma^2\!\!\;/n). \)

The variance of this estimator is equal to the μμ-element of the inverse Fisher information matrix \( \scriptstyle\mathcal{I}^{-1} \). This implies that the estimator is finite-sample efficient. Of practical importance is the fact that the standard error of \( \scriptstyle\hat\mu \) is proportional to \( \scriptstyle1/\sqrt{n} \), that is, if one wishes to decrease the standard error by a factor of 10, one must increase the number of points in the sample by a factor of 100. This fact is widely used in determining sample sizes for opinion polls and the number of trials in Monte Carlo simulations.

From the standpoint of the asymptotic theory, \( \scriptstyle\hat\mu \) is consistent, that is, it converges in probability to μ as n → ∞. The estimator is also asymptotically normal, which is a simple corollary of the fact that it is normal in finite samples:

\( \sqrt{n}(\hat\mu-\mu) \ \xrightarrow{d}\ \mathcal{N}(0,\,\sigma^2). \)

The estimator \( \scriptstyle\hat\sigma^2 \) is called the sample variance, since it is the variance of the sample \( (x_1, \ldots, x_n) \). In practice, another estimator is often used instead of \( \scriptstyle\hat\sigma^2 \). This other estimator is denoted s², and is also called the sample variance, which represents a certain ambiguity in terminology; its square root s is called the sample standard deviation. The estimator s² differs from \( \scriptstyle\hat\sigma^2 \) by having (n − 1) instead of n in the denominator (the so-called Bessel's correction):

\( s^2 = \frac{n}{n-1}\,\hat\sigma^2 = \frac{1}{n-1} \sum_{i=1}^n (x_i - \overline{x})^2. \)
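A minimal Python sketch of the three estimators above; the function name `normal_estimates` is illustrative. The standard library's `statistics.pvariance` and `statistics.variance` use the n and (n − 1) denominators respectively, which gives a convenient cross-check.

```python
from statistics import pvariance, variance

def normal_estimates(xs):
    """Return (mu_hat, sigma2_hat, s2) for a sample xs with at least two points."""
    n = len(xs)
    if n < 2:
        raise ValueError("need at least two observations")
    mu_hat = sum(xs) / n                       # sample mean, MLE of mu
    ss = sum((x - mu_hat) ** 2 for x in xs)    # sum of squared deviations
    sigma2_hat = ss / n                        # MLE of sigma^2 (biased)
    s2 = ss / (n - 1)                          # Bessel-corrected, unbiased
    return mu_hat, sigma2_hat, s2

# Cross-check against the standard library: pvariance divides by n, variance by n - 1.
data = [2, 4, 4, 4, 5, 5, 7, 9]
mu_hat, sigma2_hat, s2 = normal_estimates(data)
assert abs(sigma2_hat - pvariance(data)) < 1e-12
assert abs(s2 - variance(data)) < 1e-12
```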

The difference between s² and \( \scriptstyle\hat\sigma^2 \) becomes negligibly small for large n. In finite samples, however, the motivation behind the use of s² is that it is an unbiased estimator of the underlying parameter σ², whereas \( \scriptstyle\hat\sigma^2 \) is biased. Also, by the Lehmann–Scheffé theorem the estimator s² is uniformly minimum variance unbiased (UMVU),[29] which makes it the "best" estimator among all unbiased ones. However, it can be shown that the biased estimator \( \scriptstyle\hat\sigma^2 \) is "better" than s² in terms of the mean squared error (MSE) criterion. In finite samples both s² and \( \scriptstyle\hat\sigma^2 \) have scaled chi-squared distributions with (n − 1) degrees of freedom:

\( s^2 \ \sim\ \frac{\sigma^2}{n-1} \cdot \chi^2_{n-1}, \qquad \hat\sigma^2 \ \sim\ \frac{\sigma^2}{n} \cdot \chi^2_{n-1}\ . \)

The first of these expressions shows that the variance of s² is equal to 2σ⁴/(n − 1), which is slightly greater than the σσ-element of the inverse Fisher information matrix \( \scriptstyle\mathcal{I}^{-1} \), namely 2σ⁴/n. Thus, s² is not an efficient estimator for σ², and moreover, since s² is UMVU, we can conclude that a finite-sample efficient estimator for σ² does not exist.

Applying the asymptotic theory, both estimators s² and \( \scriptstyle\hat\sigma^2 \) are consistent, that is, they converge in probability to σ² as the sample size n → ∞. The two estimators are also both asymptotically normal:

\( \sqrt{n}(\hat\sigma^2 - \sigma^2) \simeq \sqrt{n}(s^2-\sigma^2)\ \xrightarrow{d}\ \mathcal{N}(0,\,2\sigma^4). \)

In particular, both estimators are asymptotically efficient for σ².

By Cochran's theorem, for the normal distribution the sample mean \( \scriptstyle\hat\mu \) and the sample variance s² are independent, which means there can be no gain in considering their joint distribution. There is also a converse theorem: if in a sample the sample mean and sample variance are independent, then the sample must have come from the normal distribution. The independence between \( \scriptstyle\hat\mu \) and s can be employed to construct the so-called t-statistic:

\( t = \frac{\hat\mu-\mu}{s/\sqrt{n}} = \frac{\overline{x}-\mu}{\sqrt{\frac{1}{n(n-1)}\sum(x_i-\overline{x})^2}}\ \sim\ t_{n-1} \)

This quantity t has the Student's t-distribution with (n − 1) degrees of freedom, and it is a pivotal quantity (its distribution does not depend on the values of the parameters). Inverting the distribution of this t-statistic allows us to construct the confidence interval for μ;[30] similarly, inverting the χ² distribution of the statistic s² gives the confidence interval for σ²:[31]

\( \begin{align} & \mu \in \left[\, \hat\mu + t_{n-1,\alpha/2}\, \frac{1}{\sqrt{n}}s,\ \ \hat\mu + t_{n-1,1-\alpha/2}\,\frac{1}{\sqrt{n}}s \,\right] \approx \left[\, \hat\mu - |z_{\alpha/2}|\frac{1}{\sqrt n}s,\ \ \hat\mu + |z_{\alpha/2}|\frac{1}{\sqrt n}s \,\right], \\ & \sigma^2 \in \left[\, \frac{(n-1)s^2}{\chi^2_{n-1,1-\alpha/2}},\ \ \frac{(n-1)s^2}{\chi^2_{n-1,\alpha/2}} \,\right] \approx \left[\, s^2 - |z_{\alpha/2}|\frac{\sqrt{2}}{\sqrt{n}}s^2,\ \ s^2 + |z_{\alpha/2}|\frac{\sqrt{2}}{\sqrt{n}}s^2 \,\right], \end{align} \)

where \( t_{k,p} \) and \( \chi^2_{k,p} \) are the pth quantiles of the t- and χ²-distributions respectively. These confidence intervals are of the level 1 − α, meaning that the true values μ and σ² fall outside of these intervals with probability α. In practice people usually take α = 5%, resulting in 95% confidence intervals. The approximate formulas in the display above were derived from the asymptotic distributions of \( \scriptstyle\hat\mu \) and s². The approximate formulas become valid for large values of n, and are more convenient for manual calculation since the standard normal quantiles \( z_{\alpha/2} \) do not depend on n. In particular, the most popular value, α = 5%, results in \( |z_{0.025}| = 1.96 \).
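The following sketch computes the t-statistic and the large-sample approximate intervals from the right-hand side of the display above; the exact intervals would additionally need t and χ² quantiles, which the Python standard library does not provide. The function names are hypothetical, and `statistics.stdev` uses the (n − 1) denominator, so it returns s.

```python
import math
from statistics import NormalDist, mean, stdev

def t_statistic(xs, mu):
    """t = (x_bar - mu) / (s / sqrt(n)); Student's t with n - 1 d.f. under normality."""
    return (mean(xs) - mu) / (stdev(xs) / math.sqrt(len(xs)))

def approx_intervals(xs, alpha=0.05):
    """Large-n approximate level (1 - alpha) intervals for mu and sigma^2.

    Uses the normal-approximation formulas, not the exact t / chi-squared ones.
    """
    n = len(xs)
    mu_hat, s = mean(xs), stdev(xs)            # stdev uses the n - 1 denominator
    z = NormalDist().inv_cdf(1 - alpha / 2)    # |z_{alpha/2}|, about 1.96 for alpha = 0.05
    half_mu = z * s / math.sqrt(n)
    half_var = z * math.sqrt(2 / n) * s ** 2
    return (mu_hat - half_mu, mu_hat + half_mu), (s ** 2 - half_var, s ** 2 + half_var)
```

For small n these approximate intervals are too narrow, which is why the exact t and χ² forms are preferred there.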

Bayesian analysis of the normal distribution

Bayesian analysis of normally-distributed data is complicated by the many different possibilities that may be considered:

- Either the mean, or the variance, or neither, may be considered a fixed quantity.

- When the variance is unknown, analysis may be done directly in terms of the variance, or in terms of the precision, the reciprocal of the variance. The reason for expressing the formulas in terms of precision is that the analysis of most cases is simplified.

- Both univariate and multivariate cases need to be considered.

- Either conjugate or improper prior distributions may be placed on the unknown variables.

- An additional set of cases occurs in Bayesian linear regression, where in the basic model the data is assumed to be normally-distributed, and normal priors are placed on the regression coefficients. The resulting analysis is similar to the basic cases of independent identically distributed data, but more complex.

The formulas for the non-linear-regression cases are summarized in the conjugate prior article.

Scalar form

The following auxiliary formula is useful for simplifying the posterior update equations, which otherwise become fairly tedious.

\( a(y-x)^2 + b(x-z)^2 = (a + b)\left(x - \frac{ay+bz}{a+b}\right)^2 + \frac{ab}{a+b}(y-z)^2 \)

This equation rewrites the sum of two quadratics in x by expanding the squares, grouping the terms in x, and completing the square. Note the following about the complex constant factors attached to some of the terms:

The factor \( \frac{ay+bz}{a+b} \) has the form of a weighted average of y and z.

\( \frac{ab}{a+b} = \frac{1}{\frac{1}{a}+\frac{1}{b}} = (a^{-1} + b^{-1})^{-1} \). This shows that this factor can be thought of as resulting from a situation where the reciprocals of quantities a and b add directly, so to combine a and b themselves, it is necessary to reciprocate, add, and reciprocate the result again to get back into the original units. This is exactly the sort of operation performed by the harmonic mean, so it is not surprising that \( \frac{ab}{a+b} \) is one-half the harmonic mean of a and b.
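The scalar identity is easy to check numerically. This Python sketch (with hypothetical helper names `lhs` and `rhs`) compares both sides at random positive a, b and arbitrary x, y, z, and also checks that \( ab/(a+b) \) is one-half the harmonic mean of a and b.

```python
import math
import random
from statistics import harmonic_mean

def lhs(a, b, x, y, z):
    return a * (y - x) ** 2 + b * (x - z) ** 2

def rhs(a, b, x, y, z):
    w = (a * y + b * z) / (a + b)          # weighted average of y and z
    return (a + b) * (x - w) ** 2 + (a * b) / (a + b) * (y - z) ** 2

rng = random.Random(0)
for _ in range(1000):
    a, b = rng.uniform(0.1, 5.0), rng.uniform(0.1, 5.0)
    x, y, z = (rng.uniform(-10.0, 10.0) for _ in range(3))
    assert math.isclose(lhs(a, b, x, y, z), rhs(a, b, x, y, z), rel_tol=1e-9, abs_tol=1e-9)
    # ab/(a+b) is one-half the harmonic mean of a and b
    assert math.isclose(a * b / (a + b), harmonic_mean([a, b]) / 2, rel_tol=1e-12)
```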

Vector form

A similar formula can be written for the sum of two vector quadratics: If \( \mathbf{x}, \mathbf{y}, \mathbf{z} \) are vectors of length k, and \( \mathbf{A} \) and \( \mathbf{B} \) are symmetric, invertible matrices of size \( k\times k \) (such that \( \mathbf{A}+\mathbf{B} \) is also invertible), then

\( (\mathbf{y}-\mathbf{x})'\mathbf{A}(\mathbf{y}-\mathbf{x}) + (\mathbf{x}-\mathbf{z})'\mathbf{B}(\mathbf{x}-\mathbf{z}) = (\mathbf{x} - \mathbf{c})'(\mathbf{A}+\mathbf{B})(\mathbf{x} - \mathbf{c}) + (\mathbf{y} - \mathbf{z})'(\mathbf{A}^{-1} + \mathbf{B}^{-1})^{-1}(\mathbf{y} - \mathbf{z}) \)

where

\( \mathbf{c} = (\mathbf{A} + \mathbf{B})^{-1}(\mathbf{A}\mathbf{y} + \mathbf{B}\mathbf{z}) \)

Note that the form \( \mathbf{x}'\mathbf{A}\mathbf{x} \) is called a quadratic form and is a scalar:

\( \mathbf{x}'\mathbf{A}\mathbf{x} = \sum_{i,j}a_{ij} x_i x_j \)

In other words, it sums up all possible combinations of products of pairs of elements from \( \mathbf{x} \), with a separate coefficient for each. In addition, since \( x_i x_j = x_j x_i \), only the sum \( a_{ij} + a_{ji} \) matters for any off-diagonal elements of \( \mathbf{A} \), and there is no loss of generality in assuming that \( \mathbf{A} \) is symmetric. Furthermore, if \( \mathbf{A} \) is symmetric, then \( \mathbf{x}'\mathbf{A}\mathbf{y} = \mathbf{y}'\mathbf{A}\mathbf{x} \).
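A numerical check of the vector identity, sketched with NumPy (assumed to be available). The matrices are drawn as random symmetric positive-definite matrices, an assumption that guarantees A, B and A + B are all invertible; the function names are hypothetical. The last two returned values compare \( \mathbf{x}'\mathbf{A}\mathbf{x} \) with the explicit double sum.

```python
import numpy as np

def random_spd(rng, k):
    """A random symmetric positive-definite k x k matrix (hence invertible)."""
    m = rng.standard_normal((k, k))
    return m @ m.T + k * np.eye(k)

def check_vector_identity(k=3, seed=0):
    rng = np.random.default_rng(seed)
    A, B = random_spd(rng, k), random_spd(rng, k)
    x, y, z = rng.standard_normal((3, k))
    lhs = (y - x) @ A @ (y - x) + (x - z) @ B @ (x - z)
    c = np.linalg.solve(A + B, A @ y + B @ z)          # c = (A + B)^{-1}(Ay + Bz)
    H = np.linalg.inv(np.linalg.inv(A) + np.linalg.inv(B))
    rhs = (x - c) @ (A + B) @ (x - c) + (y - z) @ H @ (y - z)
    # The quadratic form x'Ax equals sum_{i,j} a_ij x_i x_j
    qf = sum(A[i, j] * x[i] * x[j] for i in range(k) for j in range(k))
    return lhs, rhs, x @ A @ x, qf
```

Using `np.linalg.solve` for c avoids forming \( (\mathbf{A}+\mathbf{B})^{-1} \) explicitly, which is the usual numerically preferable choice.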

The sum of differences from the mean

Another useful formula is as follows:

\( \sum_{i=1}^n (x_i-\mu)^2 = \sum_{i=1}^n(x_i-\bar{x})^2 + n(\bar{x} -\mu)^2 \)

where \( \bar{x} = \frac{1}{n}\sum_{i=1}^n x_i. \)
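The decomposition can be verified on a small data set; the helper name below is illustrative.

```python
def sum_of_squares_split(xs, mu):
    """Return (sum (x_i - mu)^2, sum (x_i - x_bar)^2 + n (x_bar - mu)^2)."""
    n = len(xs)
    xbar = sum(xs) / n
    total = sum((x - mu) ** 2 for x in xs)
    within = sum((x - xbar) ** 2 for x in xs)      # deviations from the sample mean
    return total, within + n * (xbar - mu) ** 2

total, split = sum_of_squares_split([1, 2, 3, 6], mu=0)   # both equal 50
```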

With known variance

For a set of i.i.d. normally-distributed data points X of size n where each individual point x follows \( x \sim \mathcal{N}(\mu, \sigma^2) \) with known variance σ2, the conjugate prior distribution is also normally-distributed.

This can be shown more easily by rewriting the variance as the precision, i.e. using \( \tau = 1/\sigma^2 \). Then if \( x \sim \mathcal{N}(\mu, \tau) \) and \( \mu \sim \mathcal{N}(\mu_0, \tau_0) \), where the second parameter now denotes a precision rather than a variance, we proceed as follows.

First, the likelihood function is (using the formula above for the sum of differences from the mean):

\( \begin{align} p(\mathbf{X}|\mu,\tau) &= \prod_{i=1}^n \sqrt{\frac{\tau}{2\pi}} \exp\left(-\frac{1}{2}\tau(x_i-\mu)^2\right) \\ &= \left(\frac{\tau}{2\pi}\right)^{n/2} \exp\left(-\frac{1}{2}\tau \sum_{i=1}^n (x_i-\mu)^2\right) \\ &= \left(\frac{\tau}{2\pi}\right)^{n/2} \exp\left[-\frac{1}{2}\tau \left(\sum_{i=1}^n(x_i-\bar{x})^2 + n(\bar{x} -\mu)^2\right)\right] \end{align} \)

Then the posterior of μ is:

\( \begin{align} p(\mu|\mathbf{X}) \propto p(\mathbf{X}|\mu) p(\mu) & = \left(\frac{\tau}{2\pi}\right)^{n/2} \exp\left[-\frac{1}{2}\tau \left(\sum_{i=1}^n(x_i-\bar{x})^2 + n(\bar{x} -\mu)^2\right)\right] \sqrt{\frac{\tau_0}{2\pi}} \exp\left(-\frac{1}{2}\tau_0(\mu-\mu_0)^2\right) \\ &\propto \exp\left(-\frac{1}{2}\left(\tau\left(\sum_{i=1}^n(x_i-\bar{x})^2 + n(\bar{x} -\mu)^2\right) + \tau_0(\mu-\mu_0)^2\right)\right) \\ &\propto \exp\left(-\frac{1}{2}\left(n\tau(\bar{x}-\mu)^2 + \tau_0(\mu-\mu_0)^2\right)\right) \\ &= \exp\left(-\frac{1}{2}\left((n\tau + \tau_0)\left(\mu - \dfrac{n\tau \bar{x} + \tau_0\mu_0}{n\tau + \tau_0}\right)^2 + \frac{n\tau\tau_0}{n\tau+\tau_0}(\bar{x} - \mu_0)^2\right)\right) \\ &\propto \exp\left(-\frac{1}{2}(n\tau + \tau_0)\left(\mu - \dfrac{n\tau \bar{x} + \tau_0\mu_0}{n\tau + \tau_0}\right)^2\right) \end{align} \)

In the above derivation, we used the formula above for the sum of two quadratics and eliminated all constant factors not involving \( \mu \). The result is the kernel of a normal distribution, with mean \( \frac{n\tau \bar{x} + \tau_0\mu_0}{n\tau + \tau_0} \) and precision \( n\tau + \tau_0 \), i.e.

\( p(\mu|\mathbf{X}) \sim \mathcal{N}\left(\frac{n\tau \bar{x} + \tau_0\mu_0}{n\tau + \tau_0}, n\tau + \tau_0\right) \)

This can be written as a set of Bayesian update equations for the posterior parameters in terms of the prior parameters:

\( \begin{align} \tau_0' &= \tau_0 + n\tau \\ \mu_0' &= \frac{n\tau \bar{x} + \tau_0\mu_0}{n\tau + \tau_0} \\ \bar{x} &= \frac{1}{n}\sum_{i=1}^n x_i \\ \end{align} \)

That is, to combine n data points with total precision of \( n\tau \) (or equivalently, total variance of \( \sigma^2/n \)) and mean of values \( \bar{x} \), derive a new total precision simply by adding the total precision of the data to the prior total precision, and form a new mean through a precision-weighted average, i.e. a weighted average of the data mean and the prior mean, each weighted by the associated total precision. This makes logical sense if the precision is thought of as indicating the certainty of the observations: in the distribution of the posterior mean, each of the input components is weighted by its certainty, and the certainty of this distribution is the sum of the individual certainties. (For the intuition of this, compare the expression "the whole is (or is not) greater than the sum of its parts". In addition, consider that the knowledge of the posterior comes from a combination of the knowledge of the prior and likelihood, so it makes sense that we are more certain of it than of either of its components.)

The above formula reveals why it is more convenient to do Bayesian analysis of conjugate priors for the normal distribution in terms of the precision. The posterior precision is simply the sum of the prior and likelihood precisions, and the posterior mean is computed through a precision-weighted average, as described above. The same formulas can be written in terms of variance by reciprocating all the precisions, yielding the less elegant formulas

\( \begin{align} {\sigma^2_0}' &= \frac{1}{\frac{n}{\sigma^2} + \frac{1}{\sigma_0^2}} \\ \mu_0' &= \frac{\frac{n\bar{x}}{\sigma^2} + \frac{\mu_0}{\sigma_0^2}}{\frac{n}{\sigma^2} + \frac{1}{\sigma_0^2}} \\ \bar{x} &= \frac{1}{n}\sum_{i=1}^n x_i \\ \end{align} \)
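The update equations translate directly into code. This Python sketch (function names hypothetical) implements both the precision form and the variance form. A useful property to check is that updating on the data in two batches gives the same posterior as updating on all of it at once, since each posterior serves as the prior for the next batch.

```python
def posterior_mean_known_precision(xs, tau, mu0, tau0):
    """Posterior (mean, precision) of mu for data with known precision tau,
    under the prior mu ~ N(mu0, precision tau0)."""
    n = len(xs)
    xbar = sum(xs) / n
    tau_post = tau0 + n * tau                              # precisions add
    mu_post = (n * tau * xbar + tau0 * mu0) / tau_post     # precision-weighted average
    return mu_post, tau_post

def posterior_mean_known_variance(xs, sigma2, mu0, sigma02):
    """Same update written with the variances sigma^2 and sigma_0^2."""
    n = len(xs)
    xbar = sum(xs) / n
    denom = n / sigma2 + 1 / sigma02
    return (n * xbar / sigma2 + mu0 / sigma02) / denom, 1 / denom
```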

With known mean

For a set of i.i.d. normally-distributed data points X of size n where each individual point x follows \( x \sim \mathcal{N}(\mu, \sigma^2) \) with known mean μ, the conjugate prior of the variance has an inverse gamma distribution or a scaled inverse chi-squared distribution. The two are equivalent except for having different parameterizations. The use of the inverse gamma is more common, but the scaled inverse chi-squared is more convenient, so we use it in the following derivation. The prior for σ² is as follows:

\( p(\sigma^2|\nu_0,\sigma_0^2) = \frac{(\sigma_0^2\nu_0/2)^{\nu_0/2}}{\Gamma(\nu_0/2)}~ \frac{\exp\left[ \frac{-\nu_0 \sigma_0^2}{2 \sigma^2}\right]}{(\sigma^2)^{1+\nu_0/2}} \propto \frac{\exp\left[ \frac{-\nu_0 \sigma_0^2}{2 \sigma^2}\right]}{(\sigma^2)^{1+\nu_0/2}} \)

The likelihood function from above, written in terms of the variance, is:

\( \begin{align} p(\mathbf{X}|\mu,\sigma^2) &= \left(\frac{1}{2\pi\sigma^2}\right)^{n/2} \exp\left[-\frac{1}{2\sigma^2} \sum_{i=1}^n (x_i-\mu)^2\right] \\ &= \left(\frac{1}{2\pi\sigma^2}\right)^{n/2} \exp\left[-\frac{S}{2\sigma^2}\right] \end{align} \)

where \( S = \sum_{i=1}^n (x_i-\mu)^2. \)

Then:

\( \begin{align} p(\sigma^2|\mathbf{X}) \propto p(\mathbf{X}|\sigma^2) p(\sigma^2) & = \left(\frac{1}{2\pi\sigma^2}\right)^{n/2} \exp\left[-\frac{S}{2\sigma^2}\right] \frac{(\sigma_0^2\nu_0/2)^{\nu_0/2}}{\Gamma(\nu_0/2)}~ \frac{\exp\left[ \frac{-\nu_0 \sigma_0^2}{2 \sigma^2}\right]}{(\sigma^2)^{1+\nu_0/2}} \\ &\propto \left(\frac{1}{\sigma^2}\right)^{n/2} \frac{1}{(\sigma^2)^{1+\nu_0/2}} \exp\left[-\frac{S}{2\sigma^2} + \frac{-\nu_0 \sigma_0^2}{2 \sigma^2}\right] \\ &= \frac{1}{(\sigma^2)^{1+(\nu_0+n)/2}} \exp\left[-\frac{\nu_0 \sigma_0^2 + S}{2\sigma^2}\right] \\ \end{align} \)

This is also a scaled inverse chi-squared distribution, where

\( \begin{align} \nu_0' &= \nu_0 + n \\ \nu_0'{\sigma_0^2}' &= \nu_0 \sigma_0^2 + \sum_{i=1}^n (x_i-\mu)^2 \end{align} \)

or equivalently

\( \begin{align} \nu_0' &= \nu_0 + n \\ {\sigma_0^2}' &= \frac{\nu_0 \sigma_0^2 + \sum_{i=1}^n (x_i-\mu)^2}{\nu_0+n} \end{align} \)

Reparameterizing in terms of an inverse gamma distribution, the result is:

\( \begin{align} \alpha' &= \alpha + \frac{n}{2} \\ \beta' &= \beta + \frac{\sum_{i=1}^n (x_i-\mu)^2}{2} \end{align} \)
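A sketch of the known-mean update in both parameterizations (function names hypothetical). Under the correspondence \( I\chi^2(\nu_0,\sigma_0^2) = IG(\nu_0/2, \nu_0\sigma_0^2/2) \), the two functions should agree.

```python
def update_scaled_inv_chi2(xs, mu, nu0, sigma02):
    """Posterior (nu, sigma^2 scale) of a scaled-inverse-chi-squared prior on sigma^2,
    for data with known mean mu."""
    S = sum((x - mu) ** 2 for x in xs)          # sum of squared deviations from mu
    nu_post = nu0 + len(xs)
    return nu_post, (nu0 * sigma02 + S) / nu_post

def update_inverse_gamma(xs, mu, alpha, beta):
    """Same update for the inverse gamma parameterization IG(alpha, beta)."""
    S = sum((x - mu) ** 2 for x in xs)
    return alpha + len(xs) / 2, beta + S / 2
```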

With unknown mean and variance

For a set of i.i.d. normally-distributed data points X of size n where each individual point x follows \( x \sim \mathcal{N}(\mu, \sigma^2) \) with unknown mean μ and variance σ², a combined (multivariate) conjugate prior is placed over the mean and variance, consisting of a normal-inverse-gamma distribution. Logically, this originates as follows:

From the analysis of the case with unknown mean but known variance, we see that the update equations involve sufficient statistics computed from the data consisting of the mean of the data points and the total variance of the data points, computed in turn from the known variance divided by the number of data points.

From the analysis of the case with unknown variance but known mean, we see that the update equations involve sufficient statistics over the data consisting of the number of data points and sum of squared deviations.

Keep in mind that the posterior update values serve as the prior distribution when further data is handled. Thus, we should logically think of our priors in terms of the sufficient statistics just described, with the same semantics kept in mind as much as possible.

To handle the case where both mean and variance are unknown, we could place independent priors over the mean and variance, with fixed estimates of the average mean, total variance, number of data points used to compute the variance prior, and sum of squared deviations. Note however that in reality, the total variance of the mean depends on the unknown variance, and the sum of squared deviations that goes into the variance prior (appears to) depend on the unknown mean. In practice, the latter dependence is relatively unimportant: Shifting the actual mean shifts the generated points by an equal amount, and on average the squared deviations will remain the same. This is not the case, however, with the total variance of the mean: As the unknown variance increases, the total variance of the mean will increase proportionately, and we would like to capture this dependence.

This suggests that we create a conditional prior of the mean on the unknown variance, with a hyperparameter specifying the mean of the pseudo-observations associated with the prior, and another parameter specifying the number of pseudo-observations. This number serves as a scaling parameter on the variance, making it possible to control the overall variance of the mean relative to the actual variance parameter. The prior for the variance also has two hyperparameters, one specifying the sum of squared deviations of the pseudo-observations associated with the prior, and another specifying once again the number of pseudo-observations. Note that each of the priors has a hyperparameter specifying the number of pseudo-observations, and in each case this controls the relative variance of that prior. These are given as two separate hyperparameters so that the variance (aka the confidence) of the two priors can be controlled separately.

This leads immediately to the normal-inverse-gamma distribution, which is defined as the product of the two distributions just defined, with conjugate priors used (an inverse gamma distribution over the variance, and a normal distribution over the mean, conditional on the variance) and with the same four parameters just defined.

The priors are normally defined as follows:

\( \begin{align} p(\mu|\sigma^2; \mu_0, n_0) &\sim \mathcal{N}(\mu_0,\sigma^2/n_0) \\ p(\sigma^2; \nu_0,\sigma_0^2) &\sim I\chi^2(\nu_0,\sigma_0^2) = IG(\nu_0/2, \nu_0\sigma_0^2/2) \end{align} \)

The update equations can be derived, and look as follows:

\( \begin{align} \bar{x} &= \frac{1}{n}\sum_{i=1}^n x_i \\ \mu_0' &= \frac{n_0\mu_0 + n\bar{x}}{n_0 + n} \\ n_0' &= n_0 + n \\ \nu_0' &= \nu_0 + n \\ \nu_0'{\sigma_0^2}' &= \nu_0 \sigma_0^2 + \sum_{i=1}^n (x_i-\bar{x})^2 + \frac{n_0 n}{n_0 + n}(\mu_0 - \bar{x})^2 \\ \end{align} \)

The respective numbers of pseudo-observations just add the number of actual observations to them. The new mean hyperparameter is once again a weighted average, this time weighted by the relative numbers of observations. Finally, the update for \( \nu_0'{\sigma_0^2}' \) is similar to the case with known mean, but in this case the sum of squared deviations is taken with respect to the observed data mean rather than the true mean, and as a result a new "interaction term" needs to be added to take care of the additional error source stemming from the deviation between prior and data mean.
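The update equations for the normal-inverse-gamma prior can be sketched as follows (function name hypothetical). The function returns \( {\sigma_0^2}' \) by dividing the updated \( \nu_0'{\sigma_0^2}' \) by \( \nu_0' \), and, as in the other conjugate cases, updating in two batches should agree with a single update on all the data.

```python
def update_normal_inverse_gamma(xs, mu0, n0, nu0, sigma02):
    """Posterior (mu0', n0', nu0', sigma0^2') for the prior
    mu | sigma^2 ~ N(mu0, sigma^2 / n0),  sigma^2 ~ Scaled-Inv-chi^2(nu0, sigma0^2)."""
    n = len(xs)
    xbar = sum(xs) / n
    ss = sum((x - xbar) ** 2 for x in xs)               # deviations from the data mean
    mu_post = (n0 * mu0 + n * xbar) / (n0 + n)          # pseudo-count-weighted average
    n_post = n0 + n
    nu_post = nu0 + n
    interaction = n0 * n / (n0 + n) * (mu0 - xbar) ** 2  # prior-vs-data mean term
    nu_sigma2_post = nu0 * sigma02 + ss + interaction
    return mu_post, n_post, nu_post, nu_sigma2_post / nu_post
```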


Occurrence

The occurrence of normal distribution in practical problems can be loosely classified into three categories:

- Exactly normal distributions;

- Approximately normal laws, for example when such approximation is justified by the central limit theorem; and

- Distributions modeled as normal: the normal distribution is the distribution with maximum entropy for a given mean and variance.

Exact normality

The ground state of a quantum harmonic oscillator has the Gaussian distribution.

Certain quantities in physics are distributed normally, as was first demonstrated by James Clerk Maxwell. Examples of such quantities are:

- Velocities of the molecules in the ideal gas. More generally, velocities of the particles in any system in thermodynamic equilibrium will have a normal distribution, due to the maximum entropy principle.

- Probability density function of a ground state in a quantum harmonic oscillator.

- The position of a particle which experiences diffusion. If initially the particle is located at a specific point (that is, its probability distribution is the Dirac delta function), then after time t its location is described by a normal distribution with variance t, which satisfies the diffusion equation \( \frac{\partial}{\partial t} f(x,t) = \frac{1}{2} \frac{\partial^2}{\partial x^2} f(x,t) \). If the initial location is given by a certain density function g(x), then the density at time t is the convolution of g and the normal PDF.

Approximate normality

Approximately normal distributions occur in many situations, as explained by the central limit theorem. When the outcome is produced by a large number of small effects acting additively and independently, its distribution will be close to normal. The normal approximation will not be valid if the effects act multiplicatively (instead of additively), or if there is a single external influence which has a considerably larger magnitude than the rest of the effects.

- In counting problems, where the central limit theorem includes a discrete-to-continuum approximation and where infinitely divisible and decomposable distributions are involved, such as:

- Binomial random variables, associated with binary response variables;

- Poisson random variables, associated with rare events.

- Thermal light has a Bose–Einstein distribution on very short time scales, and a normal distribution on longer timescales due to the central limit theorem.

Assumed normality


“ I can only recognize the occurrence of the normal curve — the Laplacian curve of errors — as a very abnormal phenomenon. It is roughly approximated to in certain distributions; for this reason, and on account for its beautiful simplicity, we may, perhaps, use it as a first approximation, particularly in theoretical investigations. — Pearson (1901) ”

There are statistical methods to test that assumption empirically; see the Normality tests section above.

In biology, the logarithm of various variables tends to have a normal distribution, that is, the variables themselves tend to have a log-normal distribution (after separation into male and female subpopulations), with examples including:

- Measures of size of living tissue (length, height, skin area, weight);[32]

- The length of inert appendages (hair, claws, nails, teeth) of biological specimens, in the direction of growth; presumably the thickness of tree bark also falls under this category;

- Certain physiological measurements, such as blood pressure of adult humans.

In finance, in particular in the Black–Scholes model, changes in the logarithm of exchange rates, price indices, and stock market indices are assumed normal (these variables behave like compound interest, not like simple interest, and so are multiplicative). Some mathematicians, such as Benoît Mandelbrot, have argued that log-Lévy distributions, which possess heavy tails, would be a more appropriate model, in particular for the analysis of stock market crashes.

Measurement errors in physical experiments are often modeled by a normal distribution. This use of a normal distribution does not imply that one is assuming the measurement errors are normally distributed; rather, using the normal distribution produces the most conservative predictions possible given only knowledge about the mean and variance of the errors.[33]

Fitted cumulative normal distribution to October rainfalls

In standardized testing, results can be made to have a normal distribution. This is done by either selecting the number and difficulty of questions (as in the IQ test), or by transforming the raw test scores into "output" scores by fitting them to the normal distribution. For example, the SAT's traditional range of 200–800 is based on a normal distribution with a mean of 500 and a standard deviation of 100.

Many scores are derived from the normal distribution, including percentile ranks ("percentiles" or "quantiles"), normal curve equivalents, stanines, z-scores, and T-scores. Additionally, a number of behavioral statistical procedures are based on the assumption that scores are normally distributed; for example, t-tests and ANOVAs. Bell curve grading assigns relative grades based on a normal distribution of scores.

In hydrology the distribution of long duration river discharge or rainfall, e.g. monthly and yearly totals, is often thought to be practically normal according to the central limit theorem.[34] The blue picture illustrates an example of fitting the normal distribution to ranked October rainfalls showing the 90% confidence belt based on the binomial distribution. The rainfall data are represented by plotting positions as part of the cumulative frequency analysis.

Generating values from normal distribution

The bean machine, a device invented by Francis Galton, can be called the first generator of normal random variables. This machine consists of a vertical board with interleaved rows of pins. Small balls are dropped from the top and then bounce randomly left or right as they hit the pins. The balls are collected into bins at the bottom and settle down into a pattern resembling the Gaussian curve.

In computer simulations, especially in applications of the Monte Carlo method, it is often desirable to generate values that are normally distributed. The algorithms listed below all generate standard normal deviates, since a \( \mathcal{N}(\mu, \sigma^2) \) variate can be generated as X = μ + σZ, where Z is standard normal. All these algorithms rely on the availability of a random number generator U capable of producing uniform random variates.

The most straightforward method is based on the probability integral transform property: if U is distributed uniformly on (0,1), then \( \Phi^{-1}(U) \) will have the standard normal distribution. The drawback of this method is that it relies on calculation of the probit function \( \Phi^{-1} \), which cannot be done analytically. Some approximate methods are described in Hart (1968) and in the erf article. Wichura[35] gives a fast algorithm for computing this function to 16 decimal places, which is used by R to compute random variates of the normal distribution.

An easy-to-program approximate approach that relies on the central limit theorem is as follows: generate 12 uniform U(0,1) deviates, add them all up, and subtract 6; the resulting random variable will have an approximately standard normal distribution. In truth, the distribution will be Irwin–Hall, which is a 12-section eleventh-order polynomial approximation to the normal distribution. This random deviate will have a limited range of (−6, 6).[36]
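A sketch of the inversion method, using Python's `statistics.NormalDist.inv_cdf` as the numerical \( \Phi^{-1} \); this is whatever approximation the standard library implements, not necessarily Wichura's algorithm. The function name is hypothetical. Because `random.random()` can return exactly 0, which has no finite quantile, such draws are discarded.

```python
import random
from statistics import NormalDist

_STD_NORMAL = NormalDist()   # mu = 0, sigma = 1

def normal_by_inversion(rng, mu=0.0, sigma=1.0):
    """Draw N(mu, sigma^2) as mu + sigma * Phi^{-1}(U) with U uniform on (0, 1)."""
    u = rng.random()          # random() returns values in [0.0, 1.0)
    while u == 0.0:           # inv_cdf requires 0 < p < 1, so redraw the (rare) zero
        u = rng.random()
    return mu + sigma * _STD_NORMAL.inv_cdf(u)

rng = random.Random(42)
draws = [normal_by_inversion(rng) for _ in range(5)]
```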
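The twelve-uniform approximation takes one line; the sketch below (function name hypothetical) makes it clear why the result is bounded: with `random.random()` returning values in [0, 1), the sum lies in [0, 12), so the deviate lies in [−6, 6). Its mean is 0 and its variance is 12 × 1/12 = 1.

```python
import random

def normal_approx_irwin_hall(rng):
    """Approximate N(0, 1) draw: sum of 12 uniforms minus 6 (Irwin-Hall distribution)."""
    return sum(rng.random() for _ in range(12)) - 6.0
```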

The Box–Muller method uses two independent random numbers U and V distributed uniformly on (0,1). Then the two random variables X and Y

\( \begin{align} & X = \sqrt{- 2 \ln U} \, \cos(2 \pi V) , \\ & Y = \sqrt{- 2 \ln U} \, \sin(2 \pi V) . \end{align} \)

will both have the standard normal distribution, and will be independent. This formulation arises because for a bivariate normal random vector (X Y) the squared norm X2 + Y2 will have the chi-squared distribution with two degrees of freedom, which is an easily generated exponential random variable corresponding to the quantity −2ln(U) in these equations; and the angle is distributed uniformly around the circle, chosen by the random variable V.
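A direct transcription of the Box–Muller formulas (function name hypothetical). Python's `random.random()` returns values in [0, 1), so U is taken as `1.0 - rng.random()` to keep ln U finite.

```python
import math
import random

def box_muller(rng):
    """Return two independent N(0, 1) draws from two uniforms (Box-Muller)."""
    u = 1.0 - rng.random()        # in (0, 1], so log(u) is finite
    v = rng.random()
    r = math.sqrt(-2.0 * math.log(u))   # radius: sqrt of an exponential(1/2) variate
    theta = 2.0 * math.pi * v           # uniformly distributed angle
    return r * math.cos(theta), r * math.sin(theta)
```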

The Marsaglia polar method is a modification of the Box–Muller method that does not require computation of the functions sin() and cos(). In this method U and V are drawn from the uniform (−1,1) distribution, and then S = U² + V² is computed. If S is greater than or equal to 1 (or equal to 0), the method starts over; otherwise the two quantities

\( X = U\sqrt{\frac{-2\ln S}{S}}, \qquad Y = V\sqrt{\frac{-2\ln S}{S}} \)

are returned. Again, X and Y will be independent and standard normally distributed.
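The rejection loop of the polar method can be sketched in Python as below (the name `marsaglia_polar` is hypothetical). Points outside the unit disc, and the single point S = 0 where ln S is undefined, are discarded.

```python
import math
import random


def marsaglia_polar(rng):
    """Return two independent N(0, 1) deviates without calling sin() or cos()."""
    while True:
        u = 2.0 * rng.random() - 1.0    # uniform on [-1, 1)
        v = 2.0 * rng.random() - 1.0
        s = u * u + v * v
        if 0.0 < s < 1.0:               # accept points strictly inside the unit disc, excluding the origin
            factor = math.sqrt(-2.0 * math.log(s) / s)
            return u * factor, v * factor


rng = random.Random(11)
x, y = marsaglia_polar(rng)
```

About π/4 ≈ 78.5% of candidate points are accepted, so on average slightly more uniforms are consumed than in Box–Muller, in exchange for avoiding the trigonometric calls.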

The ratio method[37] is a rejection method. The algorithm proceeds as follows:

1. Generate two independent uniform deviates U and V.

2. Compute \( X = \sqrt{8/e}\,(V - 0.5)/U \).

3. If \( X^2 \le 5 - 4e^{1/4}U \), accept X and terminate the algorithm.

4. If \( X^2 \ge 4e^{-1.35}/U + 1.4 \), reject X and start over from step 1.

5. If \( X^2 \le -4\ln U \), accept X; otherwise start over from step 1.
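A Python sketch of this ratio-of-uniforms sampler is given below, under the assumption that U is drawn from (0, 1] so that both 1/U and ln U are defined; the function name is hypothetical. The final test X² ≤ −4 ln U is the exact acceptance condition U² ≤ exp(−X²/2), while the two earlier tests are cheap bounds on ln U that avoid most logarithm evaluations.

```python
import math
import random

_SQRT_8_OVER_E = math.sqrt(8.0 / math.e)
_E_QUARTER = math.exp(0.25)
_E_MINUS_1_35 = math.exp(-1.35)


def ratio_of_uniforms_normal(rng):
    """Draw one N(0, 1) deviate with the ratio-of-uniforms rejection method."""
    while True:
        u = 1.0 - rng.random()                  # in (0, 1]: safe to divide by and take log of
        v = rng.random()
        x = _SQRT_8_OVER_E * (v - 0.5) / u
        xx = x * x
        if xx <= 5.0 - 4.0 * _E_QUARTER * u:    # quick acceptance
            return x
        if xx >= 4.0 * _E_MINUS_1_35 / u + 1.4: # quick rejection
            continue
        if xx <= -4.0 * math.log(u):            # exact acceptance test
            return x


rng = random.Random(13)
z = ratio_of_uniforms_normal(rng)
```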

The ziggurat algorithm (Marsaglia & Tsang 2000) is faster than the Box–Muller transform and still exact. In about 97% of all cases it uses only two random numbers (one random integer and one random uniform), one multiplication and an if-test. Only in the remaining 3% of cases, where the combination of those two falls outside the "core of the ziggurat", does a kind of rejection sampling using logarithms, exponentials and more uniform random numbers have to be employed.

There is also some investigation[citation needed] into the connection between the fast Hadamard transform and the normal distribution, since the transform employs just addition and subtraction, and by the central limit theorem random numbers from almost any distribution will be transformed into the normal distribution. In this regard, a series of Hadamard transforms can be combined with random permutations to turn arbitrary data sets into normally distributed data.

Numerical approximations for the normal CDF

The standard normal CDF is widely used in scientific and statistical computing. The values Φ(x) may be approximated very accurately by a variety of methods, such as numerical integration, Taylor series, asymptotic series and continued fractions. Different approximations are used depending on the desired level of accuracy.

Abramowitz & Stegun (1964) give the approximation for Φ(x) for x > 0 with the absolute error |ε(x)| < 7.5·10⁻⁸ (algorithm 26.2.17):

\( \Phi(x) = 1 - \phi(x)\left(b_1t + b_2t^2 + b_3t^3 + b_4t^4 + b_5t^5\right) + \varepsilon(x), \qquad t = \frac{1}{1+b_0x}, \)

where ϕ(x) is the standard normal PDF, and b0 = 0.2316419, b1 = 0.319381530, b2 = −0.356563782, b3 = 1.781477937, b4 = −1.821255978, b5 = 1.330274429.
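A direct Python transcription of formula 26.2.17 follows, with the polynomial evaluated by Horner's rule. The extension to x < 0 through the symmetry Φ(−x) = 1 − Φ(x) is an addition made here, not part of the quoted formula, and the function names are hypothetical.

```python
import math

# Coefficients of Abramowitz & Stegun (1964), formula 26.2.17
_B0 = 0.2316419
_B = (0.319381530, -0.356563782, 1.781477937, -1.821255978, 1.330274429)


def phi_pdf(x):
    """Standard normal density."""
    return math.exp(-0.5 * x * x) / math.sqrt(2.0 * math.pi)


def normal_cdf_as26217(x):
    """Approximate Phi(x); A&S state |error| < 7.5e-8 for x >= 0."""
    if x < 0.0:
        return 1.0 - normal_cdf_as26217(-x)   # symmetry: Phi(-x) = 1 - Phi(x)
    t = 1.0 / (1.0 + _B0 * x)
    # Horner evaluation of b1*t + b2*t^2 + b3*t^3 + b4*t^4 + b5*t^5
    poly = 0.0
    for b in reversed(_B):
        poly = (poly + b) * t
    return 1.0 - phi_pdf(x) * poly
```

The bound is absolute, not relative, so for large negative x (where Φ(x) itself is far below 10⁻⁸) the result carries little relative accuracy.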

Hart (1968) lists almost a hundred rational function approximations for the erfc() function. His algorithms vary in degree of complexity and resulting precision, with a maximum absolute precision of 24 digits. An algorithm by West (2009) combines Hart's algorithm 5666 with a continued fraction approximation in the tail to provide a fast computation algorithm with 16-digit precision.

W. J. Cody (1969), after noting that Hart's (1968) solution is not suited for erf, gives a solution for both erf and erfc, with a maximal relative error bound, via rational Chebyshev approximation (Cody, W. J. (1969). "Rational Chebyshev Approximations for the Error Function").

Marsaglia (2004) suggested a simple algorithm[nb 2] based on the Taylor series expansion

\( \Phi(x) = \frac12 + \phi(x)\left( x + \frac{x^3}{3} + \frac{x^5}{3\cdot5} + \frac{x^7}{3\cdot5\cdot7} + \frac{x^9}{3\cdot5\cdot7\cdot9} + \cdots \right) \)

for calculating Φ(x) with arbitrary precision. The drawback of this algorithm is its comparatively slow calculation time (for example, it takes over 300 iterations to calculate the function with 16 digits of precision when x = 10).
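A Python sketch of the series evaluation, assuming ordinary double-precision floats; the name `normal_cdf_taylor` is hypothetical. The constant 0.91893853320467274178 is ln √(2π), and the loop stops as soon as adding the next term no longer changes the running sum. In double precision the intermediate sum overflows for |x| beyond roughly 37, so this is only meant for moderate arguments.

```python
import math

_LOG_SQRT_2PI = 0.91893853320467274178   # ln(sqrt(2*pi))


def normal_cdf_taylor(x):
    """Phi(x) = 1/2 + phi(x) * (x + x^3/3 + x^5/(3*5) + ...), summed until the sum stops changing."""
    x = float(x)
    q = x * x
    term = x        # current series term x^(2k+1) / (3*5*...*(2k+1))
    total = x       # running sum of the series
    prev = 0.0
    i = 1.0         # last odd factor in the denominator of the current term
    while total != prev:
        prev = total
        i += 2.0
        term *= q / i
        total = prev + term
    return 0.5 + total * math.exp(-0.5 * q - _LOG_SQRT_2PI)
```

The number of loop iterations grows with |x|, which is the slowness mentioned above.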

The GNU Scientific Library calculates values of the standard normal CDF using Hart's algorithms and approximations with Chebyshev polynomials.

History

Development

Some authors[38][39] attribute the credit for the discovery of the normal distribution to de Moivre, who in 1738[nb 3] published, in the second edition of his "The Doctrine of Chances", the study of the coefficients in the binomial expansion of (a + b)ⁿ. De Moivre proved that the middle term in this expansion has the approximate magnitude of \( 2/\sqrt{2\pi n} \), and that "If m or ½n be a Quantity infinitely great, then the Logarithm of the Ratio, which a Term distant from the middle by the Interval ℓ, has to the middle Term, is \( -\frac{2\ell\ell}{n} \)."[40] Although this theorem can be interpreted as the first obscure expression for the normal probability law, Stigler points out that de Moivre himself did not interpret his results as anything more than an approximate rule for the binomial coefficients, and in particular de Moivre lacked the concept of the probability density function.[41]

Carl Friedrich Gauss discovered the normal distribution in 1809 as a way to rationalize the method of least squares.

In 1809 Gauss published his monograph "Theoria motus corporum coelestium in sectionibus conicis solem ambientium", in which, among other things, he introduces several important statistical concepts, such as the method of least squares, the method of maximum likelihood, and the normal distribution. Gauss used M, M′, M′′, … to denote the measurements of some unknown quantity V, and sought the "most probable" estimator: the one which maximizes the probability φ(M−V) · φ(M′−V) · φ(M′′−V) · … of obtaining the observed experimental results. In his notation φΔ is the probability law of the measurement errors of magnitude Δ. Not knowing what the function φ is, Gauss requires that his method should reduce to the well-known answer: the arithmetic mean of the measured values.[nb 4] Starting from these principles, Gauss demonstrates that the only law that rationalizes the choice of the arithmetic mean as an estimator of the location parameter is the normal law of errors:[42]

\( \varphi\mathit{\Delta} = \frac{h}{\surd\pi}\, e^{-\mathrm{hh}\Delta\Delta}, \)

where h is "the measure of the precision of the observations". Using this normal law as a generic model for errors in the experiments, Gauss formulates what is now known as the non-linear weighted least squares (NWLS) method.[43]

Marquis de Laplace proved the central limit theorem in 1810, consolidating the importance of the normal distribution in statistics.

Although Gauss was the first to suggest the normal distribution law, Laplace made significant contributions.[nb 5] It was Laplace who first posed the problem of aggregating several observations in 1774,[44] although his own solution led to the Laplacian distribution. It was Laplace who first calculated the value of the integral \( \int_{-\infty}^{\infty} e^{-t^2}\,dt = \sqrt{\pi} \) in 1782, providing the normalization constant for the normal distribution.[45] Finally, it was Laplace who in 1810 proved and presented to the Academy the fundamental central limit theorem, which emphasized the theoretical importance of the normal distribution.[46]

In 1809 the American mathematician Robert Adrain published two derivations of the normal probability law, simultaneously with and independently of Gauss.[47] His works remained largely unnoticed by the scientific community until 1871, when they were "rediscovered" by Abbe.[48]

In the middle of the 19th century Maxwell demonstrated that the normal distribution is not just a convenient mathematical tool, but may also occur in natural phenomena:[49] "The number of particles whose velocity, resolved in a certain direction, lies between x and x + dx is

\( \mathrm{N}\; \frac{1}{\alpha\;\sqrt\pi}\; e^{-\frac{x^2}{\alpha^2}}dx \)"

Naming

Since its introduction, the normal distribution has been known by many different names: the law of error, the law of facility of errors, Laplace's second law, Gaussian law, etc. Gauss himself apparently coined the term with reference to the "normal equations" involved in its applications, with normal having its technical meaning of orthogonal rather than "usual".[50] However, by the end of the 19th century some authors[nb 6] had started using the name normal distribution, where the word "normal" was used as an adjective, the term now being seen as a reflection of the fact that this distribution was regarded as typical, common, and thus "normal". Peirce (one of those authors) once defined "normal" thus: "...the 'normal' is not the average (or any other kind of mean) of what actually occurs, but of what would, in the long run, occur under certain circumstances."[51] Around the turn of the 20th century Pearson popularized the term normal as a designation for this distribution.[52]

“Many years ago I called the Laplace–Gaussian curve the normal curve, which name, while it avoids an international question of priority, has the disadvantage of leading people to believe that all other distributions of frequency are in one sense or another 'abnormal'.” (Pearson 1920)

Pearson was also the first to write the distribution in terms of the standard deviation σ, as in modern notation. Soon after this, in 1915, Fisher added the location parameter to the formula for the normal distribution, expressing it in the way it is written nowadays:

\( df = \frac{1}{\sigma\sqrt{2\pi}}e^{-\frac{(x-m)^2}{2\sigma^2}}dx \)

The term "standard normal", which denotes the normal distribution with zero mean and unit variance, came into general use around the 1950s, appearing in the popular textbooks by P. G. Hoel (1947) "Introduction to mathematical statistics" and A. M. Mood (1950) "Introduction to the theory of statistics".[53]

The name "Gaussian distribution" honors Carl Friedrich Gauss, who introduced the distribution in 1809 as a way of rationalizing the method of least squares, as outlined above. The related work of Laplace, also outlined above, has led to the normal distribution sometimes being called Laplacian,[citation needed] especially in French-speaking countries. Among English speakers, both "normal distribution" and "Gaussian distribution" are in common use, with different terms preferred by different communities.

See also


Behrens–Fisher problem: the long-standing problem of testing whether two normal samples with different variances have the same means

Bhattacharyya distance: a method used to separate mixtures of normal distributions

Erdős–Kac theorem: on the occurrence of the normal distribution in number theory

Gaussian blur: a convolution that uses the normal distribution as a kernel

Sum of normally distributed random variables

Normally distributed and uncorrelated does not imply independent

Notes

^ The designation "bell curve" is ambiguous: there are many other distributions which are "bell"-shaped: the Cauchy distribution, Student's t-distribution, generalized normal, logistic, etc.

^ For example, this algorithm is given in the article on the bc programming language.

^ De Moivre first published his findings in 1733, in a pamphlet "Approximatio ad Summam Terminorum Binomii (a + b)ⁿ in Seriem Expansi" that was designated for private circulation only. It was not until 1738 that he made his results publicly available. The original pamphlet was reprinted several times; see, for example, Walker (1985).

^ "It has been customary certainly to regard as an axiom the hypothesis that if any quantity has been determined by several direct observations, made under the same circumstances and with equal care, the arithmetical mean of the observed values affords the most probable value, if not rigorously, yet very nearly at least, so that it is always most safe to adhere to it." — Gauss (1809, section 177)

^ "My custom of terming the curve the Gauss–Laplacian or normal curve saves us from proportioning the merit of discovery between the two great astronomer mathematicians." quote from Pearson (1905, p. 189)

^ Besides those specifically referenced here, such use is encountered in the works of Peirce, Galton[Full citation needed] and Lexis[Full citation needed] approximately around 1875.[citation needed]

Citations

^ Casella & Berger (2001, p. 102)

^ Gale Encyclopedia of Psychology — Normal Distribution

^ Cover, T. M.; Thomas, Joy A (2006). Elements of information theory. John Wiley and Sons. p. 254.

^ Park, Sung Y.; Bera, Anil K. (2009). "Maximum entropy autoregressive conditional heteroskedasticity model". Journal of Econometrics (Elsevier): 219–230. Retrieved 2011-06-02.

^ Halperin et al. (1965, item 7)

^ McPherson (1990, p. 110)

^ Bernardo & Smith (2000)

^ a b c Patel & Read (1996, [2.1.4])

^ Fan (1991, p. 1258)

^ Patel & Read (1996, [2.1.8])

^ Scott, Clayton; Robert Nowak (August 7, 2003). "The Q-function". Connexions.

^ Barak, Ohad (April 6, 2006). "Q function and error function". Tel Aviv University.

^ Weisstein, Eric W., "Normal Distribution Function" from MathWorld.

^ Bryc (1995, p. 23)

^ Bryc (1995, p. 24)

^ WolframAlpha.com

^ part 1, part 2

^ Galambos & Simonelli (2004, Theorem 3.5)

^ Bryc (1995, p. 35)

^ Bryc (1995, p. 35)

^ Bryc (1995, p. 27)

^ Lukacs & King (1954)

^ Patel & Read (1996, [2.3.6])

^ http://www.allisons.org/ll/MML/KL/Normal/

^ Jordan, Michael I. (February 8, 2010). "Stat260: Bayesian Modeling and Inference: The Conjugate Prior for the Normal Distribution" (lecture notes).

^ Cover & Thomas (2006, p. 254)

^ Amari & Nagaoka (2000)

^ Mathworld entry for Normal Product Distribution

^ a b Krishnamoorthy (2006, p. 127)

^ Krishnamoorthy (2006, p. 130)

^ Krishnamoorthy (2006, p. 133)

^ Huxley (1932)

^ Jaynes, E. T. (2003). Probability Theory: The Logic of Science. Cambridge University Press. pp. 592–593.