
Laplace transform

The Laplace transform is a widely used integral transform with many applications in physics and engineering. Denoted \( \displaystyle\mathcal{L} \left\{f(t)\right\} \) , it is a linear operator of a function f(t) with a real argument t (t ≥ 0) that transforms it to a function F(s) with a complex argument s. This transformation is essentially bijective for the majority of practical uses; the respective pairs of f(t) and F(s) are matched in tables. The Laplace transform has the useful property that many relationships and operations over the originals f(t) correspond to simpler relationships and operations over the images F(s).[1] It is named after Pierre-Simon Laplace, who introduced the transform in his work on probability theory.

The Laplace transform is related to the Fourier transform, but whereas the Fourier transform expresses a function or signal as a series of modes of vibration (frequencies), the Laplace transform resolves a function into its moments. Like the Fourier transform, the Laplace transform is used for solving differential and integral equations. In physics and engineering it is used for analysis of linear time-invariant systems such as electrical circuits, harmonic oscillators, optical devices, and mechanical systems. In such analyses, the Laplace transform is often interpreted as a transformation from the time-domain, in which inputs and outputs are functions of time, to the frequency-domain, where the same inputs and outputs are functions of complex angular frequency, in radians per unit time. Given a simple mathematical or functional description of an input or output to a system, the Laplace transform provides an alternative functional description that often simplifies the process of analyzing the behavior of the system, or in synthesizing a new system based on a set of specifications.

History

The Laplace transform is named after mathematician and astronomer Pierre-Simon Laplace, who used a similar transform (now called the Z-transform) in his work on probability theory. The current widespread use of the transform came about soon after World War II, although it had been used in the 19th century by Abel, Lerch, Heaviside, and Bromwich. The older history of similar transforms is as follows. From 1744, Leonhard Euler investigated integrals of the form

\( z = \int X(x) e^{ax}\, dx \quad\text{ and }\quad z = \int X(x) x^A \, dx \)

as solutions of differential equations but did not pursue the matter very far.[2] Joseph Louis Lagrange was an admirer of Euler and, in his work on integrating probability density functions, investigated expressions of the form

\( \int X(x) e^{- a x } a^x\, dx, \)

which some modern historians have interpreted within modern Laplace transform theory.[3][4]

These types of integrals seem first to have attracted Laplace's attention in 1782 where he was following in the spirit of Euler in using the integrals themselves as solutions of equations.[5] However, in 1785, Laplace took the critical step forward when, rather than just looking for a solution in the form of an integral, he started to apply the transforms in the sense that was later to become popular. He used an integral of the form:

\( \int x^s \phi (x)\, dx, \)

akin to a Mellin transform, to transform the whole of a difference equation, in order to look for solutions of the transformed equation. He then went on to apply the Laplace transform in the same way and started to derive some of its properties, beginning to appreciate its potential power.[6]

Laplace also recognised that Joseph Fourier's method of Fourier series for solving the diffusion equation could only apply to a limited region of space as the solutions were periodic. In 1809, Laplace applied his transform to find solutions that diffused indefinitely in space.[7]

Formal definition

The Laplace transform of a function f(t), defined for all real numbers t ≥ 0, is the function F(s), defined by:

\( F(s) = \mathcal{L} \left\{f(t)\right\}=\int_0^{\infty} e^{-st} f(t) \,dt. \)

The parameter s is a complex number:

\( s = \sigma + i \omega, \, \) with real numbers σ and ω.

The meaning of the integral depends on types of functions of interest. A necessary condition for existence of the integral is that ƒ must be locally integrable on [0,∞). For locally integrable functions that decay at infinity or are of exponential type, the integral can be understood as a (proper) Lebesgue integral. However, for many applications it is necessary to regard it as a conditionally convergent improper integral at ∞. Still more generally, the integral can be understood in a weak sense, and this is dealt with below.
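As a rough numerical illustration of the definition, the integral can be approximated on a truncated interval and compared with a known closed form. The sketch below is only an assumption-laden check, not a general-purpose method: the helper `laplace_numeric`, the truncation point `t_max`, the step count `n`, and the test function e^{−2t} are all illustrative choices.

```python
import cmath
import math


def laplace_numeric(f, s, t_max=20.0, n=100_000):
    """Trapezoidal approximation of the integral of exp(-s*t) * f(t) over [0, t_max].

    t_max and n are illustrative; t_max must be large enough that the
    integrand has decayed to a negligible size.
    """
    h = t_max / n
    total = 0.5 * (f(0.0) + cmath.exp(-s * t_max) * f(t_max))
    for k in range(1, n):
        t = k * h
        total += cmath.exp(-s * t) * f(t)
    return total * h


def decaying_exponential(t):
    return math.exp(-2.0 * t)


s = 1.0 + 2.0j                 # sigma = 1, omega = 2
approx = laplace_numeric(decaying_exponential, s)
exact = 1.0 / (s + 2.0)        # known transform of exp(-alpha*t) with alpha = 2
error = abs(approx - exact)
print(approx, exact, error)
```

The truncation is harmless here because the integrand decays like e^{−3t}; for functions near the edge of the region of convergence a much larger `t_max` would be needed.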

One can define the Laplace transform of a finite Borel measure μ by the Lebesgue integral[8]

\( (\mathcal{L}\mu)(s) = \int_{[0,\infty)} e^{-st}d\mu(t). \)

An important special case is where μ is a probability measure or, even more specifically, the Dirac delta function. In operational calculus, the Laplace transform of a measure is often treated as though the measure came from a distribution function ƒ. In that case, to avoid potential confusion, one often writes

\( (\mathcal{L}f)(s) = \int_{0^-}^\infty e^{-st}f(t)\,dt \)

where the lower limit of 0− is shorthand notation for

\( \lim_{\varepsilon\downarrow 0^+}\int_{-\varepsilon}^\infty. \)

This limit emphasizes that any point mass located at 0 is entirely captured by the Laplace transform. Although with the Lebesgue integral, it is not necessary to take such a limit, it does appear more naturally in connection with the Laplace–Stieltjes transform.

Probability theory

In pure and applied probability, the Laplace transform is defined by means of an expectation value. If X is a random variable with probability density function ƒ, then the Laplace transform of ƒ is given by the expectation

\( (\mathcal{L}f)(s) = E\left[e^{-sX} \right]. \, \)

By abuse of language, this is referred to as the Laplace transform of the random variable X itself. Replacing s by −t gives the moment generating function of X. The Laplace transform has applications throughout probability theory, including first passage times of stochastic processes such as Markov chains, and renewal theory.
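The expectation form lends itself to a simple Monte Carlo estimate. The following sketch assumes, purely for illustration, an exponentially distributed X with rate 2, for which the transform is known to be rate/(rate + s); the function name `laplace_of_random_variable`, the sample size, and the seed are hypothetical choices.

```python
import math
import random


def laplace_of_random_variable(samples, s):
    """Estimate E[exp(-s*X)] by averaging over samples of X (real s only)."""
    return sum(math.exp(-s * x) for x in samples) / len(samples)


rng = random.Random(0)              # fixed seed so the run is repeatable
rate = 2.0
samples = [rng.expovariate(rate) for _ in range(100_000)]

s = 1.0
estimate = laplace_of_random_variable(samples, s)
exact = rate / (rate + s)           # closed form for the exponential distribution
print(estimate, exact)
```

Calling the same function with a negative argument, for example `laplace_of_random_variable(samples, -0.5)`, estimates the moment generating function at t = 0.5, in line with the substitution s → −t.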

Bilateral Laplace transform

Main article: Two-sided Laplace transform

When one says "the Laplace transform" without qualification, the unilateral or one-sided transform is normally intended. The Laplace transform can be alternatively defined as the bilateral Laplace transform or two-sided Laplace transform by extending the limits of integration to be the entire real axis. If that is done the common unilateral transform simply becomes a special case of the bilateral transform where the definition of the function being transformed is multiplied by the Heaviside step function.

The bilateral Laplace transform is defined as follows:

\( F(s) = \mathcal{L}\left\{f(t)\right\} =\int_{-\infty}^{\infty} e^{-st} f(t)\,dt. \)

Inverse Laplace transform

For more details on this topic, see Inverse Laplace transform.

The inverse Laplace transform is given by the following complex integral, which is known by various names (the Bromwich integral, the Fourier-Mellin integral, and Mellin's inverse formula):

\( f(t) = \mathcal{L}^{-1} \{F(s)\} = \frac{1}{2 \pi i} \lim_{T\to\infty}\int_{ \gamma - i T}^{ \gamma + i T} e^{st} F(s)\,ds, \)

where \( \gamma \) is a real number so that the contour path of integration is in the region of convergence of F(s). An alternative formula for the inverse Laplace transform is given by Post's inversion formula.
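The Bromwich integral can be approximated directly by truncating the vertical contour at ±T and applying the trapezoidal rule. This is a minimal sketch under stated assumptions, not a robust inversion algorithm: the test transform 1/(s+1)², whose inverse is t·e^{−t}, is chosen because it decays quickly enough along the contour, and `bromwich_inverse`, `T`, and `n` are illustrative names and values.

```python
import cmath
import math


def bromwich_inverse(F, t, gamma, T=500.0, n=50_000):
    """Approximate the inverse transform at time t along Re(s) = gamma.

    The contour is truncated to |Im(s)| <= T and sampled at n + 1 points.
    """
    h = 2.0 * T / n
    total = 0.0 + 0.0j
    for k in range(n + 1):
        omega = -T + k * h
        s = complex(gamma, omega)
        weight = 0.5 if k in (0, n) else 1.0   # trapezoidal end-point weights
        total += weight * cmath.exp(s * t) * F(s)
    # ds = i d(omega), so the prefactor 1/(2*pi*i) becomes 1/(2*pi).
    return (total * h / (2.0 * math.pi)).real


def F(s):
    return 1.0 / (s + 1.0) ** 2


approx = bromwich_inverse(F, t=1.0, gamma=0.5)   # gamma lies in Re(s) > -1
exact = 1.0 * math.exp(-1.0)                     # t * exp(-t) at t = 1
print(approx, exact)
```

Transforms that decay only like 1/|s| converge much more slowly under plain truncation, which is one reason specialised inversion methods are normally preferred in practice.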

Region of convergence

If ƒ is a locally integrable function (or more generally a Borel measure locally of bounded variation), then the Laplace transform F(s) of ƒ converges provided that the limit

\( \lim_{R\to\infty}\int_0^R f(t)e^{-ts}\,dt \)

exists. The Laplace transform converges absolutely if the integral

\( \int_0^\infty |f(t)e^{-ts}|\,dt \)

exists (as a proper Lebesgue integral). The Laplace transform is usually understood as conditionally convergent, meaning that it converges in the former instead of the latter sense.

The set of values for which F(s) converges absolutely is either of the form Re{s} > a or else Re{s} ≥ a, where a is an extended real constant, −∞ ≤ a ≤ ∞. (This follows from the dominated convergence theorem.) The constant a is known as the abscissa of absolute convergence, and depends on the growth behavior of ƒ(t).[9] Analogously, the two-sided transform converges absolutely in a strip of the form a < Re{s} < b, and possibly including the lines Re{s} = a or Re{s} = b.[10] The subset of values of s for which the Laplace transform converges absolutely is called the region of absolute convergence or the domain of absolute convergence. In the two-sided case, it is sometimes called the strip of absolute convergence. The Laplace transform is analytic in the region of absolute convergence.

Similarly, the set of values for which F(s) converges (conditionally or absolutely) is known as the region of conditional convergence, or simply the region of convergence (ROC). If the Laplace transform converges (conditionally) at s = s₀, then it automatically converges for all s with Re{s} > Re{s₀}. Therefore the region of convergence is a half-plane of the form Re{s} > a, possibly including some points of the boundary line Re{s} = a. In the region of convergence Re{s} > Re{s₀}, the Laplace transform of ƒ can be expressed by integrating by parts as the integral

\( F(s) = (s-s_0)\int_0^\infty e^{-(s-s_0)t}\beta(t)\,dt,\quad \beta(u)=\int_0^u e^{-s_0t}f(t)\,dt. \)

That is, in the region of convergence F(s) can effectively be expressed as the absolutely convergent Laplace transform of some other function. In particular, it is analytic.

A variety of theorems, in the form of Paley–Wiener theorems, exist concerning the relationship between the decay properties of ƒ and the properties of the Laplace transform within the region of convergence.

In engineering applications, a function corresponding to a linear time-invariant (LTI) system is stable if every bounded input produces a bounded output. This is equivalent to the absolute convergence of the Laplace transform of the impulse response function in the region Re{s} ≥ 0. As a result, LTI systems are stable provided the poles of the Laplace transform of the impulse response function have negative real part.
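For a system whose transfer function is rational and proper, the pole criterion above reduces to a one-line test. The sketch below assumes the poles are already known; the function name `is_bibo_stable` and the example pole sets are hypothetical.

```python
def is_bibo_stable(poles):
    """Return True if every pole has a strictly negative real part."""
    return all(complex(p).real < 0 for p in poles)


damped_oscillator = [-1 + 2j, -1 - 2j]   # poles in the open left half-plane
undamped_oscillator = [1j, -1j]          # poles on the imaginary axis
growing_mode = [0.5, -3.0]               # one pole in the right half-plane

print(is_bibo_stable(damped_oscillator))
print(is_bibo_stable(undamped_oscillator))
print(is_bibo_stable(growing_mode))
```

Poles exactly on the imaginary axis are treated as unstable here, since the impulse response then fails to be absolutely integrable.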

Properties and theorems

The Laplace transform has a number of properties that make it useful for analyzing linear dynamical systems. The most significant advantage is that differentiation and integration become multiplication and division, respectively, by s (similarly to logarithms changing multiplication of numbers to addition of their logarithms). Because of this property, the Laplace variable s is also known as the operator variable in the L domain: either the derivative operator or (for s⁻¹) the integration operator. The transform turns integral equations and differential equations into polynomial equations, which are much easier to solve. Once solved, use of the inverse Laplace transform reverts to the time domain.

Given the functions f(t) and g(t), and their respective Laplace transforms F(s) and G(s):

\( f(t) = \mathcal{L}^{-1} \{ F(s) \} \)

\( g(t) = \mathcal{L}^{-1} \{ G(s) \} \)

the following table is a list of properties of unilateral Laplace transform:[11]

| Property | Time domain | 's' domain | Comment |
|---|---|---|---|
| Linearity | \( a f(t) + b g(t) \) | \( a F(s) + b G(s) \) | Can be proved using basic rules of integration. |
| Frequency differentiation | \( t f(t) \) | \( -F'(s) \) | \( F' \) is the first derivative of \( F \). |
| Frequency differentiation | \( t^{n} f(t) \) | \( (-1)^{n} F^{(n)}(s) \) | More general form, nth derivative of F(s). |
| Differentiation | \( f'(t) \) | \( s F(s) - f(0) \) | ƒ is assumed to be a differentiable function, and its derivative is assumed to be of exponential type. This can then be obtained by integration by parts. |
| Second differentiation | \( f''(t) \) | \( s^2 F(s) - s f(0) - f'(0) \) | ƒ is assumed twice differentiable and the second derivative to be of exponential type. Follows by applying the differentiation property to \( f'(t) \). |
| General differentiation | \( f^{(n)}(t) \) | \( s^n F(s) - s^{n - 1} f(0) - \cdots - f^{(n - 1)}(0) \) | ƒ is assumed to be n-times differentiable, with nth derivative of exponential type. Follows by mathematical induction. |
| Frequency integration | \( \frac{f(t)}{t} \) | \( \int_s^\infty F(\sigma)\, d\sigma \) | |
| Integration | \( \int_0^t f(\tau)\, d\tau = (u * f)(t) \) | \( {1 \over s} F(s) \) | \( u(t) \) is the Heaviside step function. Note \( (u * f)(t) \) is the convolution of \( u(t) \) and \( f(t) \). |
| Time scaling | \( f(at) \) | \( \frac{1}{\lvert a \rvert} F \left( {s \over a} \right) \) | |
| Frequency shifting | \( e^{at} f(t) \) | \( F(s - a) \) | |
| Time shifting | \( f(t - a) u(t - a) \) | \( e^{-as} F(s) \) | \( u(t) \) is the Heaviside step function. |
| Multiplication | \( f(t) g(t) \) | \( \frac{1}{2\pi i}\lim_{T\to\infty}\int_{c-iT}^{c+iT}F(\sigma)G(s-\sigma)\,d\sigma \) | The integration is done along the vertical line \( \mathrm{Re}(\sigma)=c \) that lies entirely within the region of convergence of F.[12] |
| Convolution | \( (f * g)(t) = \int_0^t f(\tau)g(t-\tau)\,d\tau \) | \( F(s) \cdot G(s) \) | ƒ(t) and g(t) are extended by zero for t < 0 in the definition of the convolution. |
| Complex conjugation | \( f^*(t) \) | \( F^*(s^*) \) | |
| Cross-correlation | \( f(t)\star g(t) \) | \( F^*(-s^*)\cdot G(s) \) | |
| Periodic function | \( f(t) \) | \( {1 \over 1 - e^{-Ts}} \int_0^T e^{-st} f(t)\,dt \) | \( f(t) \) is a periodic function of period \( T \) so that \( f(t) = f(t + T), \; \forall t\ge 0. \) This is the result of the time shifting property and the geometric series. |

Initial value theorem:

\( f(0^+)=\lim_{s\to \infty}{sF(s)}. \)

Final value theorem:

\( f(\infty)=\lim_{s\to 0}{sF(s)} \) , if all poles of sF(s) are in the left half-plane.

The final value theorem is useful because it gives the long-term behaviour without having to perform partial fraction decompositions or other difficult algebra. If sF(s) has poles in the right half-plane or on the imaginary axis (e.g. for \( f(t) = e^t \) or \( f(t) = \sin(t) \)), the limit f(∞) does not exist and the formula does not apply.
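Both theorems can be tried on a transform whose time-domain behaviour is known. The sketch below assumes the pair f(t) = 1 − e^{−αt} and F(s) = α/(s(s+α)), for which f(0⁺) = 0 and f(∞) = 1; the value α = 3 and the evaluation points standing in for the limits are illustrative.

```python
alpha = 3.0


def s_times_F(s):
    """s * F(s) for F(s) = alpha / (s * (s + alpha)), simplified by hand."""
    return alpha / (s + alpha)


# The only pole of s*F(s) is at s = -alpha, in the left half-plane,
# so the final value theorem applies.
final_value = s_times_F(1e-9)      # stands in for the limit s -> 0
initial_value = s_times_F(1e9)     # stands in for the limit s -> infinity

print(final_value)     # close to f(infinity) = 1
print(initial_value)   # close to f(0+) = 0
```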

Proof of the Laplace transform of a function's derivative

It is often convenient to use the differentiation property of the Laplace transform to find the transform of a function's derivative. This can be derived from the basic expression for a Laplace transform as follows:

\( \begin{align} \mathcal{L} \left\{f(t)\right\} & = \int_{0^-}^{\infty} e^{-st} f(t)\,dt \\[8pt] & = \left[\frac{f(t)e^{-st}}{-s} \right]_{0^-}^{\infty} - \int_{0^-}^\infty \frac{e^{-st}}{-s} f'(t) \, dt\quad \text{(by parts)} \\[8pt] & = \left[-\frac{f(0)}{-s}\right] + \frac{1}{s}\mathcal{L}\left\{f'(t)\right\}, \end{align} \)

yielding

\( \mathcal{L}\left\{ f'(t) \right\} = s\cdot\mathcal{L} \left\{ f(t) \right\}-f(0), \)

and in the bilateral case,

\( \mathcal{L}\left\{ { f'(t) } \right\} = s \int_{-\infty}^\infty e^{-st} f(t)\,dt = s \cdot \mathcal{L} \{ f(t) \}. \)

The general result

\( \mathcal{L} \left\{ f^{(n)}(t) \right\} = s^n \cdot \mathcal{L} \left\{ f(t) \right\} - s^{n - 1} f(0) - \cdots - f^{(n - 1)}(0), \)

where \( f^{(n)} \) is the n-th derivative of f, can then be established with an inductive argument.
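The differentiation rule can be sanity-checked numerically by transforming a function and its derivative separately. This is a sketch under illustrative assumptions: f(t) = cos(3t), the real test point s = 2, and the helper `laplace_numeric` with its truncation parameters are not taken from the text.

```python
import cmath
import math


def laplace_numeric(f, s, t_max=20.0, n=100_000):
    """Trapezoidal approximation of the integral of exp(-s*t) * f(t) over [0, t_max]."""
    h = t_max / n
    total = 0.5 * (f(0.0) + cmath.exp(-s * t_max) * f(t_max))
    for k in range(1, n):
        t = k * h
        total += cmath.exp(-s * t) * f(t)
    return total * h


def f(t):
    return math.cos(3.0 * t)


def f_prime(t):
    return -3.0 * math.sin(3.0 * t)


s = 2.0
lhs = laplace_numeric(f_prime, s)              # transform of the derivative
rhs = s * laplace_numeric(f, s) - f(0.0)       # s F(s) - f(0)
print(lhs, rhs)
```

Both sides should agree with the closed form −9/(s² + 9) evaluated at s = 2, that is −9/13.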

Evaluating improper integrals

Let \( \mathcal{L}\left\{f(t)\right\}=F(s), \) then (see the table above)

\( \mathcal{L}\left\{\frac{f(t)}{t}\right\}=\int_{s}^{\infty}F(p)\, dp, \)

or

\( \int_{0}^{\infty}\frac{f(t)}{t}e^{-st}\, dt=\int_{s}^{\infty}F(p)\, dp. \)

Letting \( s\to 0, \) we get the identity

\( \int_{0}^{\infty}\frac{f(t)}{t}\, dt=\int_{0}^{\infty}F(p)\, dp. \)

For example,

\( \int_{0}^{\infty}\frac{\cos at-\cos bt}{t}\, dt=\int_{0}^{\infty}\left(\frac{p}{p^{2}+a^{2}}-\frac{p}{p^{2}+b^{2}}\right)\, dp=\frac{1}{2}\left.\ln\frac{p^{2}+a^{2}}{p^{2}+b^{2}}\right|_{0}^{\infty}=\ln b-\ln a. \)

Another example is the Dirichlet integral.
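The cosine example can be checked numerically for s > 0, where the factor e^{−st} makes the integral easy to truncate; carrying out the antiderivative above from s instead of 0 gives ½·ln((s² + b²)/(s² + a²)). The sketch below assumes a = 1 and b = 2, and the names `damped_integral` and `closed_form` are illustrative.

```python
import math


def damped_integral(a, b, s, t_max=40.0, n=200_000):
    """Trapezoidal approximation of the integral over [0, t_max] of
    (cos(a t) - cos(b t)) / t * exp(-s t)."""
    def g(t):
        if t == 0.0:
            return 0.0   # limit of (cos(a t) - cos(b t)) / t as t -> 0
        return (math.cos(a * t) - math.cos(b * t)) / t * math.exp(-s * t)

    h = t_max / n
    total = 0.5 * (g(0.0) + g(t_max))
    for k in range(1, n):
        total += g(k * h)
    return total * h


def closed_form(a, b, s):
    """Integral of F(p) from s to infinity for F(p) = p/(p^2+a^2) - p/(p^2+b^2)."""
    return 0.5 * math.log((s * s + b * b) / (s * s + a * a))


a, b = 1.0, 2.0
numeric = damped_integral(a, b, s=1.0)
print(numeric, closed_form(a, b, 1.0))

# As s decreases toward 0 the closed form approaches ln(b) - ln(a).
for s in (1.0, 0.1, 0.01, 0.0):
    print(s, closed_form(a, b, s))
```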

Relationship to other transforms

Laplace–Stieltjes transform

The (unilateral) Laplace–Stieltjes transform of a function g : R → R is defined by the Lebesgue–Stieltjes integral

\( \{\mathcal{L}^*g\}(s) = \int_0^\infty e^{-st}dg(t). \)

The function g is assumed to be of bounded variation. If g is the antiderivative of ƒ:

\( g(x) = \int_0^x f(t)\,dt \)

then the Laplace–Stieltjes transform of g and the Laplace transform of ƒ coincide. In general, the Laplace–Stieltjes transform is the Laplace transform of the Stieltjes measure associated to g. So in practice, the only distinction between the two transforms is that the Laplace transform is thought of as operating on the density function of the measure, whereas the Laplace–Stieltjes transform is thought of as operating on its cumulative distribution function.[13]

Fourier transform

The continuous Fourier transform is equivalent to evaluating the bilateral Laplace transform with imaginary argument s = iω or s = 2πfi :

\( \begin{align} \hat{f}(\omega) & = \mathcal{F}\left\{f(t)\right\} \\[1em] & = \mathcal{L}\left\{f(t)\right\}|_{s = i\omega} = F(s)|_{s = i \omega}\\[1em] & = \int_{-\infty}^{\infty} e^{-\imath \omega t} f(t)\,\mathrm{d}t.\\ \end{align} \)

This expression excludes the scaling factor \( 1/\sqrt{2 \pi} \) , which is often included in definitions of the Fourier transform. This relationship between the Laplace and Fourier transforms is often used to determine the frequency spectrum of a signal or dynamical system.

The above relation is valid as stated if and only if the region of convergence (ROC) of F(s) contains the imaginary axis, σ = 0. For example, the function f(t) = cos(ω₀t) has a Laplace transform F(s) = s/(s² + ω₀²) whose ROC is Re(s) > 0. As s = iω₀ is a pole of F(s), substituting s = iω in F(s) does not yield the Fourier transform of f(t)u(t), which is proportional to the Dirac delta-function δ(ω−ω₀).

However, a relation of the form

\( \lim_{\sigma\to 0^+} F(\sigma+i\omega) = \hat{f}(\omega) \)

holds under much weaker conditions. For instance, this holds for the above example provided that the limit is understood as a weak limit of measures (see vague topology). General conditions relating the limit of the Laplace transform of a function on the boundary to the Fourier transform take the form of Paley-Wiener theorems.

Mellin transform

The Mellin transform and its inverse are related to the two-sided Laplace transform by a simple change of variables. If in the Mellin transform

\( G(s) = \mathcal{M}\left\{g(\theta)\right\} = \int_0^\infty \theta^s g(\theta) \frac{d\theta}{\theta} \)

we set \( \theta = e^{-t} \) we get a two-sided Laplace transform.

Z-transform

The unilateral or one-sided Z-transform is simply the Laplace transform of an ideally sampled signal with the substitution of

\( z \ \stackrel{\mathrm{def}}{=}\ e^{s T} \ \)

where \( T = 1/f_s \ \) is the sampling period (in units of time, e.g. seconds) and \( f_s \ \) is the sampling rate (in samples per second or hertz).

Let

\( \Delta_T(t) \ \stackrel{\mathrm{def}}{=}\ \sum_{n=0}^{\infty} \delta(t - n T) \)

be a sampling impulse train (also called a Dirac comb) and

\( \begin{align} x_q(t) & \stackrel{\mathrm{def}}{=}\ x(t) \Delta_T(t) = x(t) \sum_{n=0}^{\infty} \delta(t - n T) \\ & = \sum_{n=0}^{\infty} x(n T) \delta(t - n T) = \sum_{n=0}^{\infty} x[n] \delta(t - n T) \end{align} \)

be the continuous-time representation of the sampled \( x(t) \), where

\( x[n] \ \stackrel{\mathrm{def}}{=}\ x(nT) \) are the discrete samples of \( x(t) \).

The Laplace transform of the sampled signal \( x_q(t) \) is

\( \begin{align} X_q(s) & = \int_{0^-}^\infty x_q(t) e^{-s t} \,dt \\ & = \int_{0^-}^\infty \sum_{n=0}^\infty x[n] \delta(t - n T) e^{-s t} \, dt \\ & = \sum_{n=0}^\infty x[n] \int_{0^-}^\infty \delta(t - n T) e^{-s t} \, dt \\ & = \sum_{n=0}^\infty x[n] e^{-n s T}. \end{align} \)

This is precisely the definition of the unilateral Z-transform of the discrete function \( x[n] \ \)

\( X(z) = \sum_{n=0}^{\infty} x[n] z^{-n} \)

with the substitution \( z \leftarrow e^{s T} \).

Comparing the last two equations, we find the relationship between the unilateral Z-transform and the Laplace transform of the sampled signal:

\( X_q(s) = X(z) \Big|_{z=e^{sT}}. \)

The similarity between the Z and Laplace transforms is expanded upon in the theory of time scale calculus.
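The relationship between the sampled-signal transform and the Z-transform can be checked on a concrete sequence. The sketch below assumes a hypothetical signal x(t) = e^{−at} sampled with period T, truncates both infinite sums to N terms, and compares them with the geometric-series closed form; all numerical values are illustrative.

```python
import cmath
import math

a = 1.0          # decay rate of the assumed signal x(t) = exp(-a t)
T = 0.1          # sampling period
s = 0.5 + 2.0j   # test point in the s-plane
N = 500          # number of terms kept in the truncated sums

samples = [math.exp(-a * n * T) for n in range(N)]   # x[n] = x(nT)

# Laplace transform of the ideally sampled signal: sum of x[n] * exp(-n s T).
X_q = sum(x_n * cmath.exp(-n * s * T) for n, x_n in enumerate(samples))

# Unilateral Z-transform evaluated at z = exp(s T).
z = cmath.exp(s * T)
X_z = sum(x_n * z ** (-n) for n, x_n in enumerate(samples))

# Closed form of the geometric series with ratio exp(-a T) / z.
closed = 1.0 / (1.0 - math.exp(-a * T) / z)

print(X_q, X_z, closed)
```

The truncation is negligible here because the ratio of the series has modulus e^{−0.15}, so the omitted tail is far below floating-point precision.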

Borel transform

The integral form of the Borel transform

\( F(s) = \int_0^\infty f(z)e^{-sz}\,dz \)

is a special case of the Laplace transform for ƒ an entire function of exponential type, meaning that

\( |f(z)|\le Ae^{B|z|} \)

for some constants A and B. The generalized Borel transform allows a different weighting function to be used, rather than the exponential function, to transform functions not of exponential type. Nachbin's theorem gives necessary and sufficient conditions for the Borel transform to be well defined.

Fundamental relationships

Since an ordinary Laplace transform can be written as a special case of a two-sided transform, and since the two-sided transform can be written as the sum of two one-sided transforms, the theories of the Laplace, Fourier, Mellin, and Z-transforms are at bottom the same subject. However, a different point of view and different characteristic problems are associated with each of these four major integral transforms.

Table of selected Laplace transforms

The following table provides Laplace transforms for many common functions of a single variable[14][15]. For definitions and explanations, see the Explanatory Notes at the end of the table.

Because the Laplace transform is a linear operator:

The Laplace transform of a sum is the sum of Laplace transforms of each term.

\( \mathcal{L}\left\{f(t) + g(t) \right\} = \mathcal{L}\left\{f(t)\right\} + \mathcal{L}\left\{ g(t) \right\} \)

The Laplace transform of a multiple of a function is that multiple times the Laplace transformation of that function.

\( \mathcal{L}\left\{a f(t)\right\} = a \mathcal{L}\left\{ f(t)\right\} \)

Using this linearity, together with trigonometric, hyperbolic, and complex-number identities, some Laplace transforms can be obtained from others more quickly than by using the definition directly.

The unilateral Laplace transform takes as input a function whose time domain is the non-negative reals, which is why all of the time domain functions in the table below are multiples of the Heaviside step function, u(t). The entries of the table that involve a time delay τ are required to be causal (meaning that τ > 0). A causal system is a system where the impulse response h(t) is zero for all time t prior to t = 0. In general, the region of convergence for causal systems is not the same as that of anticausal systems.

| Function | Time domain \( f(t) = \mathcal{L}^{-1} \left\{ F(s) \right\} \) | Laplace s-domain \( F(s) = \mathcal{L}\left\{ f(t) \right\} \) | Region of convergence | Reference |
|---|---|---|---|---|
| unit impulse | \( \delta(t) \) | \( 1 \) | all s | inspection |
| delayed impulse | \( \delta(t-\tau) \) | \( e^{-\tau s} \) | | time shift of unit impulse |
| unit step | \( u(t) \) | \( { 1 \over s } \) | \( \textrm{Re} \{ s \} > 0 \) | integrate unit impulse |
| delayed unit step | \( u(t-\tau) \) | \( { e^{-\tau s} \over s } \) | \( \textrm{Re} \{ s \} > 0 \) | time shift of unit step |
| ramp | \( t \cdot u(t) \) | \( \frac{1}{s^2} \) | \( \textrm{Re} \{ s \} > 0 \) | integrate unit impulse twice |
| delayed nth power with frequency shift | \( \frac{(t-\tau)^n}{n!} e^{-\alpha (t-\tau)} \cdot u(t-\tau) \) | \( \frac{e^{-\tau s}}{(s+\alpha)^{n+1}} \) | \( \textrm{Re} \{ s \} > - \alpha \) | integrate unit step, apply frequency shift, apply time shift |
| nth power (for integer n) | \( { t^n \over n! } \cdot u(t) \) | \( { 1 \over s^{n+1} } \) | \( \textrm{Re} \{ s \} > 0 \), \( (n > -1) \) | integrate unit step n times |
| qth power (for complex q) | \( t^q \cdot u(t) \) | \( { \Gamma(q+1) \over s^{q+1} } \) | \( \mathrm{Re}(s) > 0 \), \( \mathrm{Re}(q) > -1 \) | [16][17] |
| nth root | \( \sqrt[n]{t} \cdot u(t) \) | \( { \Gamma(\frac{1}{n}+1) \over s^{\frac{1}{n}+1} } \) | \( \textrm{Re} \{ s \} > 0 \) | set q = 1/n above |
| nth power with frequency shift | \( \frac{t^{n}}{n!}e^{-\alpha t} \cdot u(t) \) | \( \frac{1}{(s+\alpha)^{n+1}} \) | \( \textrm{Re} \{ s \} > - \alpha \) | integrate unit step, apply frequency shift |
| exponential decay | \( e^{-\alpha t} \cdot u(t) \) | \( { 1 \over s+\alpha } \) | \( \textrm{Re} \{ s \} > - \alpha \) | frequency shift of unit step |
| two-sided exponential decay | \( e^{-\alpha \lvert t \rvert} \) | \( { 2\alpha \over \alpha^2 - s^2 } \) | \( - \alpha < \textrm{Re} \{ s \} < \alpha \) | frequency shift of unit step |
| exponential approach | \( ( 1-e^{-\alpha t}) \cdot u(t) \) | \( \frac{\alpha}{s(s+\alpha)} \) | \( \textrm{Re} \{ s \} > 0 \) | unit step minus exponential decay |
| sine | \( \sin(\omega t) \cdot u(t) \) | \( { \omega \over s^2 + \omega^2 } \) | \( \textrm{Re} \{ s \} > 0 \) | Bracewell 1978, p. 227 |
| cosine | \( \cos(\omega t) \cdot u(t) \) | \( { s \over s^2 + \omega^2 } \) | \( \textrm{Re} \{ s \} > 0 \) | Bracewell 1978, p. 227 |
| hyperbolic sine | \( \sinh(\alpha t) \cdot u(t) \) | \( { \alpha \over s^2 - \alpha^2 } \) | \( \textrm{Re} \{ s \} > \lvert \alpha \rvert \) | Williams 1973, p. 88 |
| hyperbolic cosine | \( \cosh(\alpha t) \cdot u(t) \) | \( { s \over s^2 - \alpha^2 } \) | \( \textrm{Re} \{ s \} > \lvert \alpha \rvert \) | Williams 1973, p. 88 |
| exponentially decaying sine wave | \( e^{-\alpha t} \sin(\omega t) \cdot u(t) \) | \( { \omega \over (s+\alpha )^2 + \omega^2 } \) | \( \textrm{Re} \{ s \} > -\alpha \) | Bracewell 1978, p. 227 |
| exponentially decaying cosine wave | \( e^{-\alpha t} \cos(\omega t) \cdot u(t) \) | \( { s+\alpha \over (s+\alpha )^2 + \omega^2 } \) | \( \textrm{Re} \{ s \} > -\alpha \) | Bracewell 1978, p. 227 |
| natural logarithm | \( \ln (t) \cdot u(t) \) | \( - { 1 \over s}\, \left[ \ln(s)+\gamma \right] \) | \( \textrm{Re} \{ s \} > 0 \) | Williams 1973, p. 88 |
| Bessel function of the first kind, of order n | \( J_n( \omega t) \cdot u(t) \) | \( \frac{ \left(\sqrt{s^2+ \omega^2}-s\right)^{n}}{\omega^n \sqrt{s^2 + \omega^2}} \) | \( \textrm{Re} \{ s \} > 0 \), \( (n > -1) \) | Williams 1973, p. 89 |
| error function | \( \mathrm{erf}(t) \cdot u(t) \) | \( {e^{s^2/4} \left(1 - \operatorname{erf} \left(s/2\right)\right) \over s} \) | \( \textrm{Re} \{ s \} > 0 \) | Williams 1973, p. 89 |

Explanatory notes:


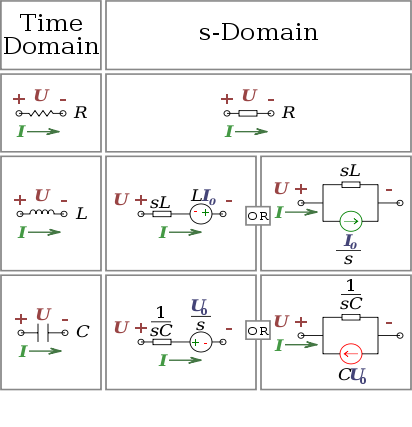

s-Domain equivalent circuits and impedances

The Laplace transform is often used in circuit analysis, and simple conversions to the s-Domain of circuit elements can be made. Circuit elements can be transformed into impedances, very similar to phasor impedances.

Here is a summary of equivalents:

Note that the resistor is exactly the same in the time domain and the s-Domain. The sources are put in if there are initial conditions on the circuit elements. For example, if a capacitor has an initial voltage across it, or if the inductor has an initial current through it, the sources inserted in the s-Domain account for that.

The equivalents for current and voltage sources are simply derived from the transformations in the table above.

Examples: How to apply the properties and theorems

The Laplace transform is used frequently in engineering and physics; the output of a linear time invariant system can be calculated by convolving its unit impulse response with the input signal. Performing this calculation in Laplace space turns the convolution into a multiplication; the latter being easier to solve because of its algebraic form. For more information, see control theory.

The Laplace transform can also be used to solve differential equations and is used extensively in electrical engineering. The Laplace transform reduces a linear differential equation to an algebraic equation, which can then be solved by the formal rules of algebra. The original differential equation can then be solved by applying the inverse Laplace transform. The English electrical engineer Oliver Heaviside first proposed a similar scheme, although without using the Laplace transform; and the resulting operational calculus is credited as the Heaviside calculus.

Example 1: Solving a differential equation

In nuclear physics, the following fundamental relationship governs radioactive decay: the number of radioactive atoms N in a sample of a radioactive isotope decays at a rate proportional to N. This leads to the first order linear differential equation

\( \frac{dN}{dt} = -\lambda N \)

where λ is the decay constant. The Laplace transform can be used to solve this equation.

Rearranging the equation to one side, we have

\( \frac{dN}{dt} + \lambda N = 0. \)

Next, we take the Laplace transform of both sides of the equation:

\( \left( s \tilde{N}(s) - N_o \right) + \lambda \tilde{N}(s) \ = \ 0 \)

where

\( \tilde{N}(s) = \mathcal{L}\{N(t)\} \)

and

\( N_o \ = \ N(0). \)

Solving, we find

\( \tilde{N}(s) = { N_o \over s + \lambda }. \)

Finally, we take the inverse Laplace transform to find the general solution

\( \begin{align} N(t) & = \mathcal{L}^{-1} \{\tilde{N}(s)\} = \mathcal{L}^{-1} \left\{ \frac{N_o}{s + \lambda} \right\} \\ & = \ N_o e^{-\lambda t}, \end{align} \)

which is indeed the correct form for radioactive decay.
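As an independent cross-check, the decay equation can be integrated numerically and compared with the closed form obtained through the Laplace transform. The sketch below uses a classical fourth-order Runge–Kutta step; the function name `decay_rk4` and the values of N₀, λ, and the end time are illustrative assumptions.

```python
import math


def decay_rk4(n0, lam, t_end, steps=500):
    """Integrate dN/dt = -lam * N from t = 0 to t_end with classical RK4."""
    h = t_end / steps
    n = n0

    def rhs(value):
        return -lam * value

    for _ in range(steps):
        k1 = rhs(n)
        k2 = rhs(n + 0.5 * h * k1)
        k3 = rhs(n + 0.5 * h * k2)
        k4 = rhs(n + h * k3)
        n += h * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
    return n


n0, lam, t_end = 1000.0, 0.3, 5.0
numeric = decay_rk4(n0, lam, t_end)
closed_form = n0 * math.exp(-lam * t_end)   # N(t) = N_o * exp(-lambda * t)
print(numeric, closed_form)
```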

Example 2: Deriving the complex impedance for a capacitor

In the theory of electrical circuits, the current flow in a capacitor is proportional to the capacitance and rate of change in the electrical potential (in SI units). Symbolically, this is expressed by the differential equation

\( i = C { dv \over dt} \)

where C is the capacitance (in farads) of the capacitor, i = i(t) is the electric current (in amperes) through the capacitor as a function of time, and v = v(t) is the voltage (in volts) across the terminals of the capacitor, also as a function of time.

Taking the Laplace transform of this equation, we obtain

\( I(s) = C \left( s V(s) - V_o \right) \)

where

\( I(s) = \mathcal{L} \{ i(t) \}, \, \)

\( V(s) = \mathcal{L} \{ v(t) \}, \, \)

and

\( V_o \ = \ v(t)|_{t=0}. \, \)

Solving for V(s) we have

\( V(s) = { I(s) \over sC } + { V_o \over s }. \)

The definition of the complex impedance Z (in ohms) is the ratio of the complex voltage V divided by the complex current I while holding the initial state Vo at zero:

\( Z(s) = { V(s) \over I(s) } \bigg|_{V_o = 0}. \)

Using this definition and the previous equation, we find:

\( Z(s) = \frac{1}{sC}, \)

which is the correct expression for the complex impedance of a capacitor.
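Evaluating this impedance on the imaginary axis, s = iω, gives the familiar phasor value for sinusoidal steady state. The sketch below assumes a 1 µF capacitor driven at 1 kHz; the function name `capacitor_impedance` and the component values are illustrative.

```python
import cmath
import math


def capacitor_impedance(s, capacitance):
    """Z(s) = 1 / (s C) with zero initial voltage."""
    return 1.0 / (s * capacitance)


capacitance = 1e-6                     # farads
omega = 2.0 * math.pi * 1000.0         # angular frequency for 1 kHz
Z = capacitor_impedance(1j * omega, capacitance)

magnitude = abs(Z)                     # about 159.15 ohms
phase_degrees = math.degrees(cmath.phase(Z))
print(Z, magnitude, phase_degrees)
```

The result is purely imaginary with a phase of −90 degrees, reflecting that the capacitor voltage lags the current by a quarter cycle.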

Example 3: Method of partial fraction expansion

Consider a linear time-invariant system with transfer function

\( H(s) = \frac{1}{(s+\alpha)(s+\beta)}. \)

The impulse response is simply the inverse Laplace transform of this transfer function:

\( h(t) = \mathcal{L}^{-1}\{H(s)\}. \)

To evaluate this inverse transform, we begin by expanding H(s) using the method of partial fraction expansion:

\( \frac{1}{(s+\alpha)(s+\beta)} = { P \over s+\alpha } + { R \over s+\beta }. \)

The unknown constants P and R are the residues located at the corresponding poles of the transfer function. Each residue represents the relative contribution of that singularity to the transfer function's overall shape. By the residue theorem, the inverse Laplace transform depends only upon the poles and their residues. To find the residue P, we multiply both sides of the equation by s + α to get

\( \frac{1}{s+\beta} = P + { R (s+\alpha) \over s+\beta }. \)

Then by letting s = −α, the contribution from R vanishes and all that is left is

\( P = \left.{1 \over s+\beta}\right|_{s=-\alpha} = {1 \over \beta - \alpha}. \)

Similarly, the residue R is given by

\( R = \left.{1 \over s+\alpha}\right|_{s=-\beta} = {1 \over \alpha - \beta}. \)

Note that

\( R = {-1 \over \beta - \alpha} = - P \)

and so the substitution of R and P into the expanded expression for H(s) gives

\( H(s) = \left( \frac{1}{\beta-\alpha} \right) \cdot \left( { 1 \over s+\alpha } - { 1 \over s+\beta } \right). \)

Finally, using the linearity property and the known transform for exponential decay (see Item #3 in the Table of Laplace Transforms, above), we can take the inverse Laplace transform of H(s) to obtain:

\( h(t) = \mathcal{L}^{-1}\{H(s)\} = \frac{1}{\beta-\alpha}\left(e^{-\alpha t}-e^{-\beta t}\right), \)

which is the impulse response of the system.
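The cover-up step used above to find P and R can be sketched in a few lines of Python. This is an illustration under stated assumptions: the helper names are hypothetical, the values α = 1 and β = 3 are arbitrary, and the poles must be distinct (α ≠ β), as the expansion itself requires.

```python
import math

def residues_distinct_poles(poles):
    """Residues of H(s) = 1 / prod_k (s - p_k) at each simple pole.

    Cover-up method: multiply by (s - p_k), then evaluate at s = p_k.
    """
    residues = []
    for k, pk in enumerate(poles):
        denom = 1.0
        for j, pj in enumerate(poles):
            if j != k:
                denom *= (pk - pj)
        residues.append(1.0 / denom)
    return residues

def impulse_response(t, poles, residues):
    """h(t) = sum_k r_k * exp(p_k * t) for t >= 0."""
    return sum(r * math.exp(p * t) for p, r in zip(poles, residues))

# Illustrative values (assumptions): alpha = 1, beta = 3.
alpha, beta = 1.0, 3.0
poles = [-alpha, -beta]
P, R = residues_distinct_poles(poles)

# Compare with the closed form derived in the text at one sample time.
t = 0.5
h_numeric = impulse_response(t, poles, [P, R])
h_closed = (math.exp(-alpha * t) - math.exp(-beta * t)) / (beta - alpha)

print("P =", P, "R =", R)
print("h(0.5) =", h_numeric)
```

With these values P = 1/(β − α) and R = −P, matching the expressions obtained above.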

Example 3.2: Convolution

The same result can be obtained using the convolution property, by treating the system as a cascade of two filters with transfer functions 1/(s+a) and 1/(s+b), where a = α and b = β. That is, the inverse of

\( H(s) = \frac{1}{(s+a)(s+b)} = \frac{1}{s+a} \cdot \frac{1}{s+b} \)

is

\( \mathcal{L}^{-1} \{ \frac{1}{s+a} \} \, * \, \mathcal{L}^{-1} \{ \frac{1}{s+b} \} = e^{-at} \, * \, e^{-bt} = \int_0^t e^{-ax}e^{-b(t-x)} \, dx = \frac{e^{-a t}-e^{-b t}}{b-a}. \)
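The convolution integral can also be evaluated numerically and compared with the closed form. The sketch below uses a simple midpoint rule; the function names, the step count, and the sample values a = 1, b = 3, t = 0.7 are assumptions chosen for illustration only.

```python
import math

def convolve_exponentials(a, b, t, n=2000):
    """Midpoint-rule approximation of int_0^t exp(-a x) exp(-b (t - x)) dx."""
    h = t / n
    total = 0.0
    for k in range(n):
        x = (k + 0.5) * h          # midpoint of the k-th subinterval
        total += math.exp(-a * x) * math.exp(-b * (t - x))
    return total * h

def closed_form(a, b, t):
    """(exp(-a t) - exp(-b t)) / (b - a), valid for a != b."""
    return (math.exp(-a * t) - math.exp(-b * t)) / (b - a)

# Illustrative values (assumptions).
a, b, t = 1.0, 3.0, 0.7
numeric = convolve_exponentials(a, b, t)
exact = closed_form(a, b, t)

print("numeric:", numeric)
print("closed form:", exact)
```

The two values agree to within the discretization error of the midpoint rule, which shrinks as n grows.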

Example 4: Mixing sines, cosines, and exponentials

Time function and its Laplace transform:

\( e^{-\alpha t}\left[\cos{(\omega t)}+\left(\frac{\beta-\alpha}{\omega}\right)\sin{(\omega t)}\right]u(t) \;\longleftrightarrow\; \frac{s+\beta}{(s+\alpha)^2+\omega^2} \)

Starting with the Laplace transform

\( X(s) = \frac{s+\beta}{(s+\alpha)^2+\omega^2}, \)

we find the inverse transform by first adding and subtracting the same constant α to the numerator:

\( X(s) = \frac{s+\alpha } { (s+\alpha)^2+\omega^2} + \frac{\beta - \alpha }{(s+\alpha)^2+\omega^2}. \)

By the shift-in-frequency property, we have

\( \begin{align} x(t) & = e^{-\alpha t} \mathcal{L}^{-1} \left\{ {s \over s^2 + \omega^2} + { \beta - \alpha \over s^2 + \omega^2 } \right\} \\[8pt] & = e^{-\alpha t} \mathcal{L}^{-1} \left\{ {s \over s^2 + \omega^2} + \left( { \beta - \alpha \over \omega } \right) \left( { \omega \over s^2 + \omega^2 } \right) \right\} \\[8pt] & = e^{-\alpha t} \left[\mathcal{L}^{-1} \left\{ {s \over s^2 + \omega^2} \right\} + \left( { \beta - \alpha \over \omega } \right) \mathcal{L}^{-1} \left\{ { \omega \over s^2 + \omega^2 } \right\} \right]. \end{align} \)

Finally, using the Laplace transforms for sine and cosine (see the table, above), we have

\( \begin{align} x(t) & = e^{-\alpha t} \left[\cos{(\omega t)}u(t)+\left(\frac{\beta-\alpha}{\omega}\right)\sin{(\omega t)}u(t)\right] \\[8pt] & = e^{-\alpha t} \left[\cos{(\omega t)}+\left(\frac{\beta-\alpha}{\omega}\right)\sin{(\omega t)}\right]u(t). \end{align} \)
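One way to gain confidence in this transform pair is to approximate the defining integral numerically at a real value of s and compare it with X(s). The following sketch assumes a trapezoidal rule on a truncated interval; the function names, the truncation point, and the parameter values α = 0.5, β = 2, ω = 3, s = 1 are illustrative assumptions.

```python
import math

def x_time(t, alpha, beta, omega):
    """x(t) for t >= 0 (the unit step u(t) equals 1 there)."""
    return math.exp(-alpha * t) * (
        math.cos(omega * t) + (beta - alpha) / omega * math.sin(omega * t)
    )

def X_laplace(s, alpha, beta, omega):
    """X(s) = (s + beta) / ((s + alpha)^2 + omega^2)."""
    return (s + beta) / ((s + alpha) ** 2 + omega ** 2)

def laplace_numeric(f, s, t_max=40.0, n=40_000):
    """Trapezoidal approximation of int_0^t_max f(t) exp(-s t) dt for real s > 0."""
    h = t_max / n
    total = 0.5 * (f(0.0) + f(t_max) * math.exp(-s * t_max))
    for k in range(1, n):
        t = k * h
        total += f(t) * math.exp(-s * t)
    return total * h

# Illustrative values (assumptions).
alpha, beta, omega, s = 0.5, 2.0, 3.0, 1.0
numeric = laplace_numeric(lambda t: x_time(t, alpha, beta, omega), s)
exact = X_laplace(s, alpha, beta, omega)

print("numeric integral:", numeric)
print("X(s):", exact)
```

The truncation at t_max is harmless here because the integrand decays like exp(−(s + α)t); for slowly decaying functions a larger interval would be needed.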

Example 5: Phase delay

Time functions and their Laplace transforms:

\( \sin{(\omega t+\phi)} \;\longleftrightarrow\; \frac{s\sin\phi+\omega \cos\phi}{s^2+\omega^2} \)

\( \cos{(\omega t+\phi)} \;\longleftrightarrow\; \frac{s\cos\phi - \omega \sin\phi}{s^2+\omega^2} \)

Starting with the Laplace transform,

\( X(s) = \frac{s\sin\phi+\omega \cos\phi}{s^2+\omega^2}, \)

we find the inverse by first rearranging terms in the fraction:

\( \begin{align} X(s) & = \frac{s \sin \phi}{s^2 + \omega^2} + \frac{\omega \cos \phi}{s^2 + \omega^2} \\ & = (\sin \phi) \left(\frac{s}{s^2 + \omega^2} \right) + (\cos \phi) \left(\frac{\omega}{s^2 + \omega^2} \right). \end{align} \)

We are now able to take the inverse Laplace transform of our terms:

\( \begin{align} x(t) & = (\sin \phi) \mathcal{L}^{-1}\left\{\frac{s}{s^2 + \omega^2} \right\} + (\cos \phi) \mathcal{L}^{-1}\left\{\frac{\omega}{s^2 + \omega^2} \right\} \\ & =(\sin \phi)(\cos \omega t) + (\sin \omega t)(\cos \phi). \end{align} \)

This is just the sine of the sum of the arguments, yielding:

\( x(t) = \sin (\omega t + \phi). \ \)

We can apply similar logic to find that

\( \mathcal{L}^{-1} \left\{ \frac{s\cos\phi - \omega \sin\phi}{s^2+\omega^2} \right\} = \cos{(\omega t+\phi)}. \ \)
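As a sketch of that similar logic, the cosine case can be worked through in the same way, by splitting the fraction and applying the table entries for cosine and sine:

\( \begin{align} \mathcal{L}^{-1} \left\{ \frac{s\cos\phi - \omega \sin\phi}{s^2+\omega^2} \right\} & = (\cos \phi) \mathcal{L}^{-1}\left\{\frac{s}{s^2 + \omega^2} \right\} - (\sin \phi) \mathcal{L}^{-1}\left\{\frac{\omega}{s^2 + \omega^2} \right\} \\ & = (\cos \phi)(\cos \omega t) - (\sin \phi)(\sin \omega t) \\ & = \cos (\omega t + \phi), \end{align} \)

where the last step is the cosine of the sum of the arguments.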

Example 6: Inferring spatial structure of astronomical object from frequency spectrum

The Laplace transform and its inverse also apply outside the usual pairing of the time domain with the frequency domain. In astronomy, for example, the inverse transform provides some information on the spatial distribution of matter in a source of radio-frequency thermal radiation that is too distant to resolve as more than a point, given only its flux density spectrum. Here the transform relates the spectrum to spatial structure rather than to time.

Assuming certain properties of the object, e.g. spherical shape and constant temperature, an inverse Laplace transformation of the object's spectrum can produce the only possible model of the distribution of matter in it (density as a function of distance from the center) that is consistent with the spectrum[18]. Where independent information on the structure of an object is available, the results of the inverse Laplace transform method have been found to agree well with it.

See also

Pierre-Simon Laplace

Laplace transform applied to differential equations

Moment-generating function

Z-transform (discrete equivalent of the Laplace transform)

Fourier transform

Sumudu transform or Laplace–Carson transform

Analog signal processing

Continuous-repayment mortgage

Hardy–Littlewood tauberian theorem

Bernstein's theorem on monotone functions

Symbolic integration

Notes

^ Korn & Korn 1967, §8.1

^ Euler 1744, (1753) and (1769)

^ Lagrange 1773

^ Grattan-Guinness 1997, p. 260

^ Grattan-Guinness 1997, p. 261

^ Grattan-Guinness 1997, pp. 261–262

^ Grattan-Guinness 1997, pp. 262–266

^ Feller 1971, §XIII.1

^ Widder 1941, Chapter II, §1

^ Widder 1941, Chapter VI, §2

^ Korn & Korn 1967, pp. 226–227

^ Bracewell 2000, Table 14.1, p. 385

^ Feller 1971, p. 432

^ K.F. Riley, M.P. Hobson, S.J. Bence (2010). Mathematical methods for physics and engineering (3rd ed.). Cambridge University Press. p. 455. ISBN 978-0-521-86153-3.

^ J.J.Distefano, A.R. Stubberud, I.J. Williams (1995). Feedback systems and control (2nd ed.). Schaum's outlines. p. 78. ISBN 0-07-017052-5.

^ S. Lipschutz, M.R. Spiegel, J. Liu (2009). Mathematical Handbook of Formulas and Tables (3rd ed.). Schaum's Outline Series. p. 183. ISBN 978-0-07-154855-7. Provides the case for real q.

^ http://mathworld.wolfram.com/LaplaceTransform.html - Wolfram MathWorld provides the case for complex q.

^ M. Salem, M.J. Seaton (1974). "On the interpretation of continuum flux observations from thermal radio sources: I. Continuum spectra and brightness contours". Monthly Notices of the Royal Astronomical Society 167: 493–510; M. Salem (1974). "II. Three-dimensional models". Monthly Notices of the Royal Astronomical Society 167: 511–516.

References

Modern

Arendt, Wolfgang; Batty, Charles J.K.; Hieber, Matthias; Neubrander, Frank (2002), Vector-Valued Laplace Transforms and Cauchy Problems, Birkhäuser Basel, ISBN 3764365498.

Bracewell, Ronald N. (1978), The Fourier Transform and its Applications (2nd ed.), McGraw-Hill Kogakusha, ISBN 0-07-007013-X

Bracewell, R. N. (2000), The Fourier Transform and Its Applications (3rd ed.), Boston: McGraw-Hill, ISBN 0071160434.

Davies, Brian (2002), Integral transforms and their applications (Third ed.), New York: Springer, ISBN 0-387-95314-0.

Feller, William (1971), An introduction to probability theory and its applications. Vol. II., Second edition, New York: John Wiley & Sons, MR0270403.

Korn, G.A.; Korn, T.M. (1967), Mathematical Handbook for Scientists and Engineers (2nd ed.), McGraw-Hill Companies, ISBN 0-0703-5370-0.

Polyanin, A. D.; Manzhirov, A. V. (1998), Handbook of Integral Equations, Boca Raton: CRC Press, ISBN 0-8493-2876-4.

Schwartz, Laurent (1952), "Transformation de Laplace des distributions" (in French), Comm. Sém. Math. Univ. Lund [Medd. Lunds Univ. Mat. Sem.] 1952: 196–206, MR0052555.

Siebert, William McC. (1986), Circuits, Signals, and Systems, Cambridge, Massachusetts: MIT Press, ISBN 0-262-19229-2.

Widder, David Vernon (1941), The Laplace Transform, Princeton Mathematical Series, v. 6, Princeton University Press, MR0005923.

Widder, David Vernon (1945), "What is the Laplace transform?", The American Mathematical Monthly 52 (8): 419–425, doi:10.2307/2305640, ISSN 0002-9890, JSTOR 2305640, MR0013447.

Williams, J. (1973), Laplace Transforms, Problem Solvers, 10, George Allen & Unwin, ISBN 0-04-512021-8

Historical

Deakin, M. A. B. (1981), "The development of the Laplace transform", Archive for History of Exact Sciences 25 (4): 343–390, doi:10.1007/BF01395660

Deakin, M. A. B. (1982), "The development of the Laplace transform", Archive for History of Exact Sciences 26: 351–381

Euler, L. (1744), "De constructione aequationum", Opera omnia, 1st series 22: 150–161.

Euler, L. (1753), "Methodus aequationes differentiales", Opera omnia, 1st series 22: 181–213.

Euler, L. (1769), "Institutiones calculi integralis, Volume 2", Opera omnia, 1st series 12, Chapters 3–5.

Grattan-Guinness, I (1997), "Laplace's integral solutions to partial differential equations", in Gillispie, C. C., Pierre Simon Laplace 1749–1827: A Life in Exact Science, Princeton: Princeton University Press, ISBN 0-691-01185-0.

Lagrange, J. L. (1773), Mémoire sur l'utilité de la méthode, Œuvres de Lagrange, 2, pp. 171–234.

External links

Online Computation of the transform or inverse transform, wims.unice.fr

Tables of Integral Transforms at EqWorld: The World of Mathematical Equations.

Weisstein, Eric W., "Laplace Transform" from MathWorld.

Laplace Transform Module by John H. Mathews

Good explanations of the initial and final value theorems

Laplace Transforms at MathPages

Laplace and Heaviside at Interactive maths.

Laplace Transform Table and Examples at Vibrationdata.

Examples of solving boundary value problems (PDEs) with Laplace Transforms

Computational Knowledge Engine, which calculates Laplace transforms and their inverse transforms.

All text is available under the terms of the GNU Free Documentation License